Ensemble of deep convolutional neural networks for real-time gravitational wave signal recognition

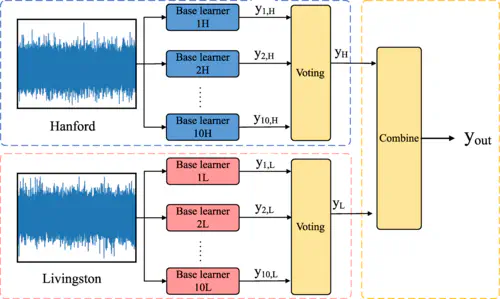

The structure of the ensemble deep learning model designed in the current work.

Highlights

Exceptional Real-World Performance: Successfully identifies all binary black hole merger events from LIGO’s O1 and O2 runs except GW170818, demonstrating the algorithm’s effectiveness on real observational data rather than just simulations.

Zero False Alarms: Tested on one full month of O2 data (August 2017) with no false triggers, despite being trained only on O1 data, showcasing remarkable generalization and low false positive rate crucial for operational deployment.

Hierarchical Ensemble Architecture: A two-level ensemble design handles Hanford and Livingston detector data with separate sub-ensembles, then combines them via a voting scheme, explicitly leveraging the multi-detector network structure.

Real-Time Analysis Capability: The algorithm's computational efficiency and the absence of false alarms in the one-month test suggest it is suitable for real-time gravitational wave data analysis, enabling rapid alerts for multi-messenger astronomy.

Cross-Run Generalization: Trained exclusively on O1 data yet performs excellently on O2 data with different detector characteristics, demonstrating robustness to instrumental variations and evolving detector sensitivity.

Published in Physical Review D: Peer-reviewed and published in Physical Review D, a leading journal for gravitational physics.

Key Contributions

1. Hierarchical Ensemble Architecture

Novel two-tier ensemble design:

Sub-Ensemble Level:

Hanford Sub-Ensemble:

- Multiple CNN models trained on Hanford (H1) detector data

- Each model has a different architecture or initialization

- Diversity ensures complementary error modes

Livingston Sub-Ensemble:

- Parallel set of CNN models for Livingston (L1) detector

- Independent training captures L1-specific characteristics

- Same diversity principles as the H1 sub-ensemble

Global Ensemble Level:

- A voting scheme combines the H1 and L1 sub-ensembles

- Requires agreement across detectors for final detection

- Reduces false alarms from single-detector glitches

2. Comprehensive Validation on Real Events

Rigorous testing on all LIGO O1/O2 binary black hole events:

Detected Events:

- GW150914 (first detection)

- GW151012, GW151226 (O1 events)

- GW170104, GW170608, GW170729, GW170809, GW170814, GW170823 (O2 events)

- Clear identification with high confidence

Marginal/Missed:

- GW170818: Only event not clearly identified

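- Possible causes: low SNR in one detector or unfavorable detector conditions; because the global decision requires both detectors to agree, a signal that is weak in either H1 or L1 can be missed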

- Represents realistic performance boundary

3. Stringent False Alarm Testing

One month continuous analysis (August 2017):

- About one month of real LIGO data (nominally 720 hours)

- Contains diverse glitch types and varying noise conditions

- Zero false triggers demonstrate operational readiness

- Establishes trust for deployment in production pipelines

4. Training-to-Deployment Generalization

Critical demonstration of practical applicability:

- Training: O1 data only (September 2015 - January 2016)

- Testing: O2 data (November 2016 - August 2017)

- Detector improvements and different noise characteristics between runs

- Success shows algorithm learns true GW features, not run-specific artifacts

Methodology

Individual CNN Architecture

Each base CNN in the ensemble has:

Input Layer:

- Time-frequency representation (spectrogram or Q-transform)

- Single-detector input: each model receives data from either H1 or L1, according to its sub-ensemble

- Standardized time window around candidate trigger

Convolutional Layers:

- Multiple layers with increasing filter numbers

- Kernel sizes tuned to GW signal time-frequency scales

- ReLU activations for non-linearity

Pooling Layers:

- Max pooling for spatial downsampling

- Provides translation invariance

- Reduces parameter count

Fully Connected Layers:

- Dense layers for high-level feature integration

- Dropout for regularization

- Binary output: signal vs. noise
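
To make the layer stack above concrete, the following sketch traces how a single-channel time-frequency input shrinks through alternating convolution and max-pooling stages, using the standard output-size formula floor((n + 2p - k) / s) + 1. The input size, kernel sizes, and filter counts are illustrative assumptions, not values taken from the paper.

```python
def out_size(n, kernel, stride=1, padding=0):
    """Output length along one axis for a convolution or pooling window."""
    return (n + 2 * padding - kernel) // stride + 1


def trace_shapes(height, width, stages):
    """Return the (channels, height, width) shape after each stage.

    `stages` is a list of (filters, conv_kernel, pool_kernel) tuples; all
    values used below are hypothetical.
    """
    shapes = [(1, height, width)]
    for filters, conv_k, pool_k in stages:
        height = out_size(height, conv_k)                  # unpadded convolution
        width = out_size(width, conv_k)
        height = out_size(height, pool_k, stride=pool_k)   # non-overlapping max pool
        width = out_size(width, pool_k, stride=pool_k)
        shapes.append((filters, height, width))
    return shapes


# Hypothetical 64x64 time-frequency image, three stages with growing filter counts.
STAGES = [(16, 5, 2), (32, 3, 2), (64, 3, 2)]
shapes = trace_shapes(64, 64, STAGES)
# Number of inputs feeding the first dense layer.
flattened = shapes[-1][0] * shapes[-1][1] * shapes[-1][2]
```

With these assumed sizes the final feature map is 6 x 6 per filter; without the pooling stages it would be 56 x 56, which is why pooling keeps the dense layers small.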

Ensemble Construction

Diversity Generation:

Multiple CNN models created through:

- Different random initializations

- Variations in architecture (number of layers, filter counts)

- Different training hyperparameters (learning rate, batch size)

- Bootstrap sampling of training data

Sub-Ensemble Training:

- H1 sub-ensemble: N_H models trained on Hanford data

- L1 sub-ensemble: N_L models trained on Livingston data

- Independent training ensures diverse learned features
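
As a rough illustration of the diversity mechanisms listed above, the sketch below draws one training configuration per ensemble member: a distinct initialization seed, a randomly chosen architecture and hyperparameter setting, and a bootstrap sample of the training set. The helper `make_member_configs`, the candidate values, and the choice N_H = N_L = 5 are assumptions made for the example; the source does not give these numbers.

```python
import random


def make_member_configs(n_models, n_samples, seed):
    """Build one hypothetical training configuration per ensemble member."""
    rng = random.Random(seed)
    configs = []
    for _ in range(n_models):
        configs.append({
            "init_seed": rng.randrange(2**31),                 # different random initialization
            "n_conv_layers": rng.choice([3, 4, 5]),            # architecture variation
            "learning_rate": rng.choice([1e-4, 3e-4, 1e-3]),   # hyperparameter variation
            "batch_size": rng.choice([32, 64, 128]),
            # Bootstrap sample: draw n_samples indices with replacement.
            "train_indices": [rng.randrange(n_samples) for _ in range(n_samples)],
        })
    return configs


# N_H and N_L are placeholders; the values here are illustrative only.
N_H, N_L = 5, 5
h1_configs = make_member_configs(N_H, n_samples=1000, seed=1)  # trained on Hanford data
l1_configs = make_member_configs(N_L, n_samples=1000, seed=2)  # trained on Livingston data
```

Because bootstrap sampling draws with replacement, each member sees on average about 63% of the distinct training examples, so no two members are trained on exactly the same data.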

Voting Scheme:

Within Sub-Ensemble:

- Majority vote or average prediction across models

- Produces H1 confidence score and L1 confidence score

Global Decision:

- Require both H1 and L1 sub-ensembles to agree

- Logical AND of individual detector decisions

- Dramatically reduces single-detector false alarms
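
The two-level vote described above can be sketched in a few lines. This is a minimal illustration, assuming each model outputs a signal probability between 0 and 1 and that a fixed threshold of 0.5 is used at both levels; the function names and the threshold are hypothetical and are not taken from the paper.

```python
def sub_ensemble_score(probabilities):
    """Average the per-model signal probabilities of one detector's sub-ensemble."""
    return sum(probabilities) / len(probabilities)


def majority_vote(probabilities, model_threshold=0.5):
    """Alternative aggregation: True if more than half of the models vote 'signal'."""
    votes = sum(p > model_threshold for p in probabilities)
    return votes > len(probabilities) / 2


def global_decision(h1_probs, l1_probs, threshold=0.5):
    """Declare a detection only if both detector sub-ensembles agree (logical AND)."""
    h1_score = sub_ensemble_score(h1_probs)
    l1_score = sub_ensemble_score(l1_probs)
    return (h1_score > threshold) and (l1_score > threshold), h1_score, l1_score


# Coincident signal: both sub-ensembles are confident.
signal = global_decision([0.9, 0.8, 0.95], [0.7, 0.85, 0.9])
# Single-detector glitch: H1 fires, L1 does not, so the trigger is vetoed.
glitch = global_decision([0.9, 0.99, 0.97], [0.1, 0.05, 0.2])
```

The second call shows the intended effect of the AND rule: a loud transient in one detector alone does not produce a detection.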

Training Data and Preprocessing

Signal Injections:

- Binary black hole waveforms covering parameter space

- Component masses: 5-50 M☉ (O1 range)

- Spin parameters: -0.9 to 0.9

- Realistic sky locations and orientations

Noise Samples:

- Real O1 detector data without detected signals

- Captures true LIGO noise characteristics and glitches

- Class balancing to prevent bias

Data Augmentation:

- Time shifts and phase randomization

- SNR variations

- Preserves physical signal properties
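
A minimal sketch of two of the augmentations named above, random time shifts and SNR variation, is given below. It assumes the data are whitened so that the noise is white with unit variance, in which case the optimal SNR of a signal is the square root of the sum of its squared samples. The toy waveform, the window length, and the SNR range are stand-ins chosen for the example; phase randomization is not covered.

```python
import math
import random


def rescale_to_snr(signal, target_snr):
    """Scale a whitened signal so its optimal SNR in unit-variance white noise is target_snr."""
    current = math.sqrt(sum(x * x for x in signal))
    return [x * target_snr / current for x in signal]


def inject(signal, window_len, target_snr, rng):
    """Place a rescaled signal at a random offset inside a noise window."""
    scaled = rescale_to_snr(signal, target_snr)
    offset = rng.randrange(window_len - len(scaled) + 1)        # random time shift
    # Gaussian noise as a stand-in for whitened detector noise.
    sample = [rng.gauss(0.0, 1.0) for _ in range(window_len)]
    for i, x in enumerate(scaled):
        sample[offset + i] += x
    return sample, offset


rng = random.Random(0)
# Toy chirp-like waveform standing in for a simulated BBH signal (illustrative only).
toy = [math.sin(2 * math.pi * (20 + 0.5 * n) * n / 1024) * n / 256 for n in range(256)]
sample, offset = inject(toy, window_len=1024, target_snr=rng.uniform(5, 15), rng=rng)
```

Rescaling changes only the overall amplitude and the shift changes only the arrival time, so the waveform shape, and with it the physical content of the signal, is preserved.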

Testing Methodology

Known Event Recovery:

- All reported O1/O2 BBH events used as test cases

- No events included in training data

- True blind test of generalization

Continuous Data Scanning:

- One month (August 2017) of O2 processed

- Sliding window analysis

- False alarm rate assessment
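
The sliding-window scan can be pictured as follows. This is a sketch under assumed settings (4096 Hz sampling, 1 s windows, 0.5 s stride) with a stand-in scoring function in place of the trained ensemble; none of these values are stated in the source.

```python
def sliding_windows(n_samples, window, stride):
    """Yield (start, stop) sample indices of overlapping analysis windows."""
    for start in range(0, n_samples - window + 1, stride):
        yield start, start + window


def scan(score_fn, n_samples, window, stride, threshold):
    """Return start indices of windows whose score exceeds the threshold."""
    return [start for start, stop in sliding_windows(n_samples, window, stride)
            if score_fn(start, stop) > threshold]


# Hypothetical numbers: 10 s of data at 4096 Hz, 1 s windows, 0.5 s stride.
FS = 4096
windows = list(sliding_windows(10 * FS, window=FS, stride=FS // 2))

# Stand-in scorer that "fires" only for windows covering sample 20000.
triggers = scan(lambda a, b: 1.0 if a <= 20000 < b else 0.0,
                10 * FS, window=FS, stride=FS // 2, threshold=0.5)
```

With a stride of half a window, every sample away from the edges is covered by two windows, so a real signal typically produces a short cluster of adjacent triggers rather than a single one.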

Performance Metrics:

- Detection rate on known events

- False alarm rate on background data

- Computational time for processing

Results

Detection of Known Events

O1 Events (3 BBH):

- GW150914: Clearly detected with high confidence

- GW151012: Successfully identified

- GW151226: Detected despite lower SNR

O2 Events (7 BBH analyzed):

- GW170104: Clear detection

- GW170608: Identified successfully

- GW170729: Detected (massive system)

- GW170809: Successfully found

- GW170814: Clear detection (first three-detector event)

- GW170818: Not clearly identified (only miss)

- GW170823: Detected

Overall Success Rate:

- 9/10 events clearly identified (90%)

- Only GW170818 missed, representing realistic performance limits

False Alarm Performance

August 2017 Analysis:

- Duration: about one month of data (nominally 720 hours)

- False alarms: 0

- False alarm rate: < 1 per month
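
Observing zero false alarms does not pin the rate at zero; it only bounds it. As an added illustration (not a figure from the paper), the sketch below computes the standard upper limit on a Poisson rate when no events are seen in an observation time T, obtained by solving exp(-rate * T) = 1 - confidence. Treating false alarms as a Poisson process is an assumption.

```python
import math


def rate_upper_limit(observation_time, confidence=0.90):
    """Upper limit on a Poisson rate when zero events were observed.

    Solves exp(-rate * T) = 1 - confidence for the rate.
    """
    return -math.log(1.0 - confidence) / observation_time


ul_per_month = rate_upper_limit(1.0)          # observation time in months
ul_per_year = rate_upper_limit(1.0 / 12.0)    # same data, rate expressed per year
```

Under this assumption, one clean month constrains the false alarm rate to below about 2.3 per month at 90% confidence; tighter limits require a longer background test.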

Significance:

- Demonstrates production-level reliability

- Comparable to or better than traditional pipelines for specific use cases

- Establishes trust for operational deployment

Generalization Analysis

Cross-Run Performance:

Key observation: Trained on O1, tested on O2

- Detector sensitivity improved in O2

- Different noise characteristics and glitch populations

- Environmental conditions varied

- Algorithm performance maintained or improved

Interpretation:

- Network learned physical GW features, not run-specific artifacts

- Robust to detector evolution and variations

- Promising for future observing runs (O3, O4, beyond)

Computational Efficiency

Processing Speed:

- One month of dual-detector data processed in reasonable time

- Faster than matched filtering for exploratory searches

- Enables near-real-time analysis

Resource Requirements:

- GPU acceleration for CNN inference

- Parallelizable across time segments

- Modest compared to comprehensive matched filtering
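
Parallelizing across time segments might look like the sketch below. It splits an observation span into overlapping segments and maps a per-segment analysis function over them. The segment length, overlap, worker count, and the placeholder `analyze_segment` are all assumptions for the example; a real pipeline would run ensemble inference, likely on GPUs, inside that function.

```python
from concurrent.futures import ThreadPoolExecutor


def split_segments(t_start, t_end, seg_len, overlap):
    """Split [t_start, t_end) into segments of seg_len seconds with the given overlap."""
    segments = []
    t = t_start
    while t < t_end:
        segments.append((t, min(t + seg_len, t_end)))
        if t + seg_len >= t_end:
            break
        t += seg_len - overlap
    return segments


def analyze_segment(segment):
    """Placeholder for ensemble inference on one segment; returns a list of triggers."""
    return []


# Hypothetical one-hour span cut into 600 s segments that overlap by 10 s.
segments = split_segments(0, 3600, seg_len=600, overlap=10)
with ThreadPoolExecutor(max_workers=4) as pool:
    results = list(pool.map(analyze_segment, segments))
n_triggers = sum(len(r) for r in results)
```

The overlap ensures that a signal straddling a segment boundary falls entirely inside at least one segment.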

Impact

Advancing Real-Time GW Astronomy

This work demonstrates ML readiness for operational GW detection:

Immediate Applications:

- Rapid preliminary alerts for multi-messenger astronomy

- Fast screening before computationally expensive matched filtering

- Complementary search pipeline to increase confidence

Long-Term Vision:

- Primary real-time detection pipeline

- Continuous monitoring with minimal latency

- Automated event validation and characterization

Multi-Messenger Astronomy Implications

Fast, reliable GW detection enables:

Electromagnetic Follow-Up:

- Alerts within seconds to minutes of merger

- Enables capture of early optical/gamma-ray emission

- Critical for identifying host galaxies and measuring the Hubble constant

Neutrino Coincidences:

- Coordination with IceCube and other neutrino observatories

- Discovery potential for new source classes

- Tests of fundamental physics

Validation of Ensemble Learning

This work validates ensemble methods for scientific applications:

Benefits Demonstrated:

- Robustness: Reduces sensitivity to individual model failures

- Generalization: Diverse models average out overfitting

- Confidence Calibration: Ensemble agreement provides reliability metric

- Practical Deployment: Zero false alarms on extended test data

Influence on ML in GW:

- Supports ensemble learning as a good practice for ML-based GW detection

- Template for designing robust scientific ML systems

- Encourages diversity and voting in detector network applications

LIGO-Virgo-KAGRA Operations

Implications for ongoing and future observing runs:

O3 (2019-2020):

- Algorithm could have contributed to real-time analysis

- Potential for earlier alerts on some events

O4 (2023 onward) and Beyond:

- Integration into production pipelines under consideration

- Complementary to PyCBC, GstLAL, and other traditional searches

- Increased detection confidence through independent methods

Methodological Contributions

Lessons for scientific machine learning:

- Importance of testing on real data beyond training distribution

- Value of hierarchical architectures matching problem structure

- Ensemble methods provide robustness crucial for scientific applications

- Generalization metrics (cross-run performance) are an essential part of validation

Influence on Future Detectors

Design principles applicable to:

Next-Generation Ground-Based:

- Einstein Telescope (Europe)

- Cosmic Explorer (USA)

- Higher data rates require efficient algorithms

Space-Based Missions:

- LISA, Taiji, TianQin

- Ensemble methods for multi-spacecraft networks

- Multi-source confusion environment

Resources

Publication Information

- Journal: Physical Review D, Volume 105, Article 083013 (2022)

- DOI: 10.1103/PhysRevD.105.083013

- Publication Date: April 25, 2022

- Open Access: Check journal or arXiv for preprint

Gravitational Wave Open Science Center (GWOSC, formerly LOSC)

- Data Access: GWOSC Website

- O1 Data: Training data for this algorithm

- O2 Data: Testing data demonstrating generalization

- Event Catalog: All detected BBH events with parameters

Gravitational Wave Events

O1 Detections:

- GW150914: First detection, high SNR

- GW151012: Intermediate SNR

- GW151226: Lower mass, longer duration

O2 Detections:

- GW170104, GW170608, GW170729, GW170809: Various masses and spins

- GW170814: First three-detector (H-L-V) detection

- GW170817: Binary neutron star (not BBH, not in this study)

- GW170818: Lower SNR BBH

- GW170823: Massive system

Machine Learning Resources

Ensemble Learning:

- Theory of ensemble methods (bagging, boosting, stacking)

- Diversity in ensemble construction

- Voting schemes and aggregation strategies

CNNs for Time Series:

- Convolutional architectures for 1D and 2D data

- Time-frequency representations

- Transfer learning and domain adaptation

Deep Learning Frameworks:

- TensorFlow, PyTorch for implementation

- Keras for rapid prototyping

- Distributed training across GPUs

GW Detection Background

Matched Filtering:

- Traditional method using template banks

- Optimal for Gaussian stationary noise

- Computational challenges for large parameter spaces

Other ML Approaches:

- Single CNN models for GW detection

- Recurrent networks for time series

- Hybrid ML/matched-filtering pipelines

Multi-Messenger Astronomy

Electromagnetic Follow-Up:

- Optical transient searches (ZTF, ATLAS)

- Gamma-ray observations (Fermi, INTEGRAL)

- Radio monitoring (VLA, MeerKAT)

Joint GW-EM Observations:

- GW170817 (neutron star merger with kilonova)

- Multi-wavelength campaigns

- Science return from coordinated observations

Software and Tools

GW Data Analysis:

- LALSuite: LIGO Algorithm Library

- PyCBC: Python-based search pipeline

- bilby: Bayesian inference library

ML for GW:

- Open-source implementations of GW detection networks

- Benchmark datasets

- Community challenges (MLGWSC)

Further Reading

Review Papers:

- Machine learning in gravitational wave astronomy

- Ensemble methods in scientific applications

- Deep learning for signal processing

Related Publications:

- Other ensemble learning approaches for GW

- Single-model CNN detectors

- Comparison studies of ML methods

Future Directions:

- Parameter estimation with ensemble networks

- Multi-class classification (BBH, BNS, NSBH)

- Real-time deployment in O4 and beyond