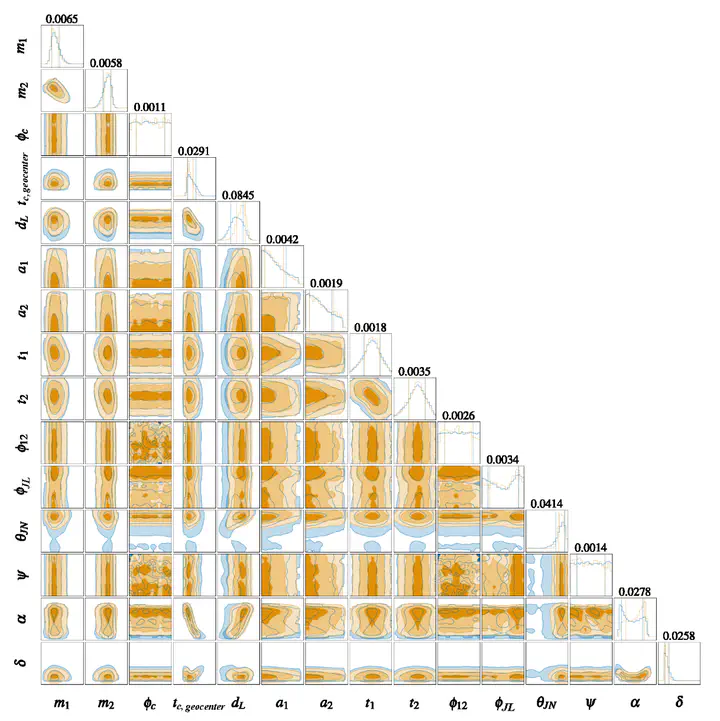

Marginalized one- (1-sigma) and two-dimensional posterior distributions for the benchmarking event (GW150914) over the complete 15 physical parameters, with our approach (orange) and the ground truth (blue).

Highlights

Prior Knowledge Integration: Pioneering approach that incorporates physical domain knowledge through strategic sampling from interim distributions to improve training dataset quality.

High-Dimensional Inference: Successfully tackles the “curse of dimensionality” in gravitational wave parameter estimation by intelligently sampling relevant regions of 15D parameter space.

Normalizing Flow Innovation: Adapts normalizing flow architecture to be more expressive and trainable for complex gravitational wave posteriors.

Ultra-Fast Inference: Generates thousands of posterior samples in ~1 second on a single GPU, enabling real-time parameter estimation.

GW150914 Validation: Comprehensive benchmarking on the first gravitational wave detection demonstrates accuracy matching traditional sampling-based methods.

Open Source: Fully reproducible with publicly available code, specifications, and detailed procedures on GitHub.

Key Contributions

1. Tackling High-Dimensional Challenges

The Curse of Dimensionality Problem

Gravitational wave parameter estimation faces severe computational challenges:

- 15-dimensional parameter space: Masses, spins, sky location, distance, angles, time, phase

- Complex joint distributions: Strong correlations and degeneracies between parameters

- Multimodal posteriors: Multiple likelihood peaks from parameter symmetries

- Expensive likelihood evaluations: Waveform generation and noise analysis are computationally intensive

Traditional MCMC Limitations

- Convergence requires millions of likelihood evaluations

- Exploration inefficient in high dimensions

- Days to weeks per event analysis

- Not scalable to growing event catalogs

2. Prior Knowledge Through Strategic Sampling

Core Innovation

Rather than uniformly sampling parameter space, this work:

Interim Distribution Sampling

- Identifies physically relevant regions using domain knowledge

- Constructs interim distributions between prior and posterior

- Samples training data from these informed distributions

- Covers subtle but important features more densely

Physical Insights Incorporated

- Chirp mass constraints from observed frequency evolution

- Mass ratio bounds from signal morphology

- Distance estimates from amplitude

- Sky location priors from detector network

- Spin-orbit alignment typical for astrophysical binaries

Training Data Quality

- More samples in high-likelihood regions

- Better coverage of posterior support

- Improved learning of multimodality

- Reduced training data requirements for same accuracy

3. Enhanced Normalizing Flow Architecture

Model Design

Coupling Layers

- Affine transformations for tractability

- Neural networks parameterize scale and shift

- Alternating variable partitioning

- Deep architecture (multiple coupling blocks)

Architectural Enhancements

- Increased expressiveness for complex posteriors

- Batch normalization for training stability

- Residual connections for gradient flow

- Permutation strategies for variable mixing

Conditioning on Data

- Gravitational wave strain as input

- Feature extraction network

- Data-dependent transformations

- Learns data → posterior mapping

Training Strategies

- Maximum likelihood objective on prior samples

- Stable optimization with adaptive learning rates

- Regularization to prevent overfitting

- Validation monitoring for early stopping

Methodology

Training Dataset Construction

Baseline Approach vs. Improved Approach

Traditional Uniform Sampling

- Sample parameters uniformly from prior

- Generate waveforms and add noise

- Compute posteriors using MCMC

- Most samples in low-likelihood regions

Prior-Informed Sampling

- Define interim distributions incorporating physics

- Sample parameters from these distributions

- Generate corresponding training data

- Higher density in relevant parameter regions

Interim Distribution Design

For each parameter θᵢ, construct an interim distribution by:

- Analyzing parameter’s role in signal morphology

- Defining narrower distribution centered on likely values

- Ensuring coverage of full parameter range

- Balancing specificity with generalization

Example: Chirp Mass

- Uniform prior: Covers all possible chirp masses

- Interim: Concentrated near observed frequency evolution

- Training samples: Denser around typical values

- Network learns detailed structure in relevant region
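
The chirp-mass example can be illustrated with a minimal sketch. It assumes the interim distribution is a mixture of a narrow truncated Gaussian and the uniform prior, so the full range stays covered while most samples land near the expected value. The mixture form, the prior bounds, the centre, the width, and the mixing weight are all hypothetical choices made for illustration; they are not taken from the paper.

```python
import random

# Hypothetical values for illustration only (not taken from the paper).
PRIOR_LOW, PRIOR_HIGH = 5.0, 90.0   # assumed chirp-mass prior range, solar masses
CENTER, WIDTH = 28.0, 3.0           # assumed centre and spread of the interim peak
MIX_WEIGHT = 0.7                    # fraction of draws taken from the narrow peak


def sample_uniform_prior(rng):
    """Draw a chirp mass uniformly across the full prior range."""
    return rng.uniform(PRIOR_LOW, PRIOR_HIGH)


def sample_interim(rng):
    """Draw from a mixture: a narrow truncated Gaussian plus the uniform prior.

    The uniform component keeps the whole prior range covered, while the
    Gaussian component concentrates samples where the signal is expected.
    """
    if rng.random() < MIX_WEIGHT:
        while True:  # rejection step keeps the Gaussian inside the prior bounds
            value = rng.gauss(CENTER, WIDTH)
            if PRIOR_LOW <= value <= PRIOR_HIGH:
                return value
    return sample_uniform_prior(rng)


def fraction_near_center(samples, half_width=2 * WIDTH):
    """Fraction of samples within half_width of the assumed centre."""
    near = [s for s in samples if abs(s - CENTER) <= half_width]
    return len(near) / len(samples)


rng = random.Random(0)
uniform_draws = [sample_uniform_prior(rng) for _ in range(20000)]
interim_draws = [sample_interim(rng) for _ in range(20000)]

print("uniform fraction near centre:", fraction_near_center(uniform_draws))
print("interim fraction near centre:", fraction_near_center(interim_draws))
```

Keeping a uniform component in the mixture is one simple way to balance specificity with generalization: the network still sees examples from the whole range, only less often.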

Normalizing Flow Model

Architecture Components

Input Layer

- Gravitational wave data (time series or frequency domain)

- Whitening using detector noise PSD

- Normalization for numerical stability

Feature Extraction

- Convolutional layers for temporal/spectral features

- Pooling for dimensionality reduction

- Fully connected layers for compression

- Embedding vector representing data

Flow Transformation

- Series of coupling layers

- Each layer: Split variables, transform half conditionally

- Neural networks (MLPs) parameterize transformations

- Alternating patterns for complete mixing
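
A single affine coupling layer, as described in the list above, can be sketched in a few lines. This is an illustrative toy rather than the paper's model: the `conditioner` function is a hypothetical stand-in for the trained MLP, its weights are arbitrary, and `context` plays the role of the data embedding.

```python
import math


def conditioner(x_fixed, context, weights):
    """Toy stand-in for the MLP: returns (log_scales, shifts) for the transformed half.

    A real model would use a trained neural network here; these fixed weights
    are purely illustrative.
    """
    summary = sum(x_fixed) + sum(context)
    log_scales = [math.tanh(w * summary) for w in weights["scale"]]
    shifts = [w * summary for w in weights["shift"]]
    return log_scales, shifts


def coupling_forward(x, context, weights):
    """Map x to y; the first half passes through, the second half is transformed."""
    half = len(x) // 2
    fixed, moving = x[:half], x[half:]
    log_scales, shifts = conditioner(fixed, context, weights)
    moved = [m * math.exp(s) + t for m, s, t in zip(moving, log_scales, shifts)]
    log_det = sum(log_scales)  # Jacobian is triangular, so log|det J| is a sum
    return fixed + moved, log_det


def coupling_inverse(y, context, weights):
    """Undo coupling_forward exactly, using the untouched first half."""
    half = len(y) // 2
    fixed, moved = y[:half], y[half:]
    log_scales, shifts = conditioner(fixed, context, weights)
    moving = [(m - t) * math.exp(-s) for m, s, t in zip(moved, log_scales, shifts)]
    return fixed + moving


weights = {"scale": [0.3, -0.2], "shift": [0.5, 0.1]}
x = [0.4, -1.2, 2.0, 0.7]
context = [0.25, -0.5]  # stands in for the embedding of the strain data

y, log_det = coupling_forward(x, context, weights)
x_back = coupling_inverse(y, context, weights)
print("round-trip error:", max(abs(a - b) for a, b in zip(x, x_back)))
```

Because the first half is left unchanged, the inverse can recompute the same scale and shift without inverting the network itself. Alternating which half is held fixed from layer to layer lets every variable be transformed eventually.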

Output Layer

- Final transformation to base distribution (15D Gaussian)

- Jacobian determinant computation for probability

- Inverse transformation for sampling: z → θ

Training Objective

- Maximize likelihood of samples under learned distribution

- Equivalent to minimizing KL divergence

- Backpropagation through flow transformations

- Stable training with prior-informed sampling
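
The first two bullets can be written out explicitly. The notation here is assumed rather than taken from the paper: $f_\phi$ is the flow mapping parameters $\theta$ to the base variable $z$ given data $d$, $p_Z$ is the standard normal base density, $q_\phi$ is the learned posterior, and $p(\theta, d)$ is the distribution the training pairs are drawn from.

$$
\log q_\phi(\theta \mid d) = \log p_Z\big(f_\phi(\theta; d)\big) + \log\left|\det \frac{\partial f_\phi(\theta; d)}{\partial \theta}\right|
$$

$$
\mathcal{L}(\phi) = -\mathbb{E}_{p(\theta, d)}\big[\log q_\phi(\theta \mid d)\big]
= \mathbb{E}_{p(d)}\Big[D_{\mathrm{KL}}\big(p(\theta \mid d)\,\|\,q_\phi(\theta \mid d)\big)\Big] + \text{const}
$$

The constant is the expected entropy of $p(\theta \mid d)$, which does not depend on $\phi$, so minimizing the negative log-likelihood and minimizing the expected KL divergence select the same parameters.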

Inference Procedure

Sampling from Posterior

Given observed gravitational wave data:

- Extract features using trained feature network

- Sample from base Gaussian distribution: z ~ N(0, I)

- Apply inverse flow transformations: θ = f⁻¹(z, data)

- Obtain posterior sample: θ ~ p(θ|data)

- Repeat for many samples (thousands in seconds)
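
The sampling steps above can be sketched as a short loop. Both `extract_features` and `inverse_flow` are hypothetical placeholders with a toy two-parameter output; a trained model would substitute its feature network and its inverted coupling layers, and would typically draw all samples in one batched GPU call.

```python
import random
import statistics


def extract_features(strain):
    """Toy feature network: compress the data into a two-number summary."""
    return [statistics.fmean(strain), statistics.pstdev(strain)]


def inverse_flow(z, features):
    """Toy inverse transformation z -> theta, conditioned on the features.

    A trained flow would apply its coupling layers in reverse; this affine map
    is a hypothetical placeholder with the same interface.
    """
    mean, spread = features
    theta_0 = mean + spread * z[0]
    theta_1 = 2.0 * mean + spread * (0.5 * z[0] + z[1])  # correlated with theta_0
    return [theta_0, theta_1]


def sample_posterior(strain, n_samples, rng):
    """Draw n_samples parameter vectors for one observed data segment."""
    features = extract_features(strain)              # computed once, reused for all samples
    samples = []
    for _ in range(n_samples):
        z = [rng.gauss(0.0, 1.0) for _ in range(2)]  # z ~ N(0, I)
        samples.append(inverse_flow(z, features))    # theta = f^{-1}(z, data)
    return samples


rng = random.Random(1)
strain = [rng.gauss(3.0, 0.5) for _ in range(256)]   # synthetic stand-in for real data
posterior_samples = sample_posterior(strain, 5000, rng)
theta_0_values = [s[0] for s in posterior_samples]
print("posterior mean of theta_0:", statistics.fmean(theta_0_values))
```

The point of the structure is that the expensive step, feature extraction, runs once per event, while each additional posterior sample costs only one pass through the inverse flow.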

Computational Efficiency

- Feature extraction: Single forward pass

- Flow inversion: Fast sequential transformations

- Parallel sampling: GPU acceleration

- Total time: ~1 second for thousands of samples

Results

GW150914 Benchmark

Comparison with LALInference

LALInference: LIGO’s official parameter estimation code using nested sampling

Posterior Agreement

- 1D marginalized distributions: Excellent overlap

- 2D correlations: All parameter covariances captured

- Corner plots: Visual indistinguishability

- Statistical measures: KL divergence <0.01 for most parameters
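
One way a per-parameter KL divergence might be estimated from two sets of one-dimensional posterior samples is with a shared histogram. This is a sketch under stated assumptions: the binning scheme, the pseudo-count smoothing, and the Gaussian test samples are illustrative choices, and the source does not say which estimator was used for the reported values.

```python
import math
import random


def binned_probabilities(samples, low, high, n_bins):
    """Histogram the samples and return smoothed bin probabilities."""
    counts = [0.5] * n_bins  # half a pseudo-count per bin avoids log(0)
    width = (high - low) / n_bins
    for value in samples:
        index = min(int((value - low) / width), n_bins - 1)
        counts[index] += 1
    total = sum(counts)
    return [c / total for c in counts]


def kl_divergence(samples_p, samples_q, n_bins=40):
    """Estimate KL(p || q) in nats from two one-dimensional sample sets."""
    low = min(min(samples_p), min(samples_q))
    high = max(max(samples_p), max(samples_q))
    p = binned_probabilities(samples_p, low, high, n_bins)
    q = binned_probabilities(samples_q, low, high, n_bins)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))


rng = random.Random(2)
reference = [rng.gauss(0.0, 1.0) for _ in range(20000)]  # stands in for the reference sampler
matching = [rng.gauss(0.0, 1.0) for _ in range(20000)]   # same distribution, new draws
shifted = [rng.gauss(1.0, 1.0) for _ in range(20000)]    # deliberately biased by one sigma

kl_match = kl_divergence(matching, reference)
kl_shift = kl_divergence(shifted, reference)
print("KL, matching samples:", kl_match)
print("KL, shifted samples: ", kl_shift)
```

Histogram estimates depend on the bin count and sample size, so values from different analyses are only comparable when those settings match.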

Parameter Recovery

Intrinsic Parameters

- Primary mass m₁: 36.2⁺⁵·²₋₃·⁸ M☉ (both methods agree)

- Secondary mass m₂: 29.1⁺³·⁷₋₄·⁴ M☉ (both methods agree)

- Chirp mass: <0.1% difference

- Mass ratio: Consistent within uncertainties

- Effective spin χeff: Agreement within 0.05
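
The chirp mass and mass ratio follow from the component masses through standard definitions. The sketch below applies them to the median masses quoted above and adds a small helper for the kind of percent comparison reported for the chirp mass. The convention q = m₂/m₁ ≤ 1 is an assumption, since the source does not state which convention it uses.

```python
def chirp_mass(m1, m2):
    """Chirp mass (m1 * m2)^(3/5) / (m1 + m2)^(1/5), in the units of the inputs."""
    return (m1 * m2) ** 0.6 / (m1 + m2) ** 0.2


def mass_ratio(m1, m2):
    """Mass ratio with the lighter mass in the numerator, so 0 < q <= 1."""
    heavy, light = max(m1, m2), min(m1, m2)
    return light / heavy


def percent_difference(value, reference):
    """Relative difference between two estimates, in percent of the reference."""
    return 100.0 * abs(value - reference) / abs(reference)


m1, m2 = 36.2, 29.1  # median component masses quoted above, in solar masses
mc = chirp_mass(m1, m2)
q = mass_ratio(m1, m2)
print(f"chirp mass: {mc:.2f} solar masses, mass ratio: {q:.3f}")
```

The chirp mass is the combination that governs the frequency evolution of the inspiral, which is why it is typically the best-constrained mass parameter.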

Extrinsic Parameters

- Sky location: Degree-level consistency

- Distance: 420⁺¹⁵⁰₋₁⁸⁰ Mpc (both methods)

- Inclination: Consistent distributions

- Polarization: Captured multimodality

Time and Phase

- Coalescence time: Sub-millisecond agreement

- Phase at coalescence: Consistent

Multimodal Features

- Polarization angle multimodality preserved

- Sky location degeneracies captured

- Spin orientation correlations maintained

Computational Performance

Speed Comparison

- LALInference (Nested Sampling): ~48 hours on a computing cluster

- Normalizing Flow: ~1 second on a single V100 GPU

- Speed-up factor: ~170,000x
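
The quoted factor follows directly from the two wall-clock times above, as this short check shows. It compares elapsed time only and does not account for the difference in hardware between a cluster and a single GPU.

```python
SECONDS_PER_HOUR = 3600

lalinference_seconds = 48 * SECONDS_PER_HOUR  # ~48 hours of nested sampling
flow_seconds = 1.0                            # ~1 second of flow sampling

speedup = lalinference_seconds / flow_seconds
print(f"speed-up: {speedup:,.0f}x")  # prints "speed-up: 172,800x"
```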

Practical Implications

- Real-time parameter estimation feasible

- Rapid follow-up for multi-messenger astronomy

- Enables large-scale population studies

- Facilitates rapid alerts for electromagnetic observers

Accuracy Assessment

Injection Studies

Testing on simulated signals with known parameters:

Recovery Accuracy

- Median parameter errors <0.1σ (unbiased)

- 68% credible intervals: 68% coverage (well-calibrated)

- 95% credible intervals: 95% coverage

- No systematic biases detected
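
The coverage check behind these calibration figures can be sketched with a toy injection study. As a stand-in for the flow, it assumes a one-parameter conjugate Gaussian model whose exact posterior is known; the model, the noise level, and the sample counts are illustrative and not taken from the paper.

```python
import random


def central_interval(samples, level):
    """Central credible interval estimated from posterior samples."""
    ordered = sorted(samples)
    n = len(ordered)
    lower_index = int((1.0 - level) / 2.0 * n)
    upper_index = n - 1 - lower_index
    return ordered[lower_index], ordered[upper_index]


def run_injections(n_injections, n_posterior_samples, noise_sigma, rng):
    """Return the fraction of injections whose truth lies inside each interval."""
    hits = {0.68: 0, 0.95: 0}
    # Conjugate Gaussian model: prior N(0, 1), likelihood N(theta, noise_sigma^2).
    posterior_var = noise_sigma**2 / (1.0 + noise_sigma**2)
    posterior_sigma = posterior_var**0.5
    for _ in range(n_injections):
        truth = rng.gauss(0.0, 1.0)                     # injected parameter
        observed = truth + rng.gauss(0.0, noise_sigma)  # noisy measurement
        posterior_mean = observed / (1.0 + noise_sigma**2)
        samples = [rng.gauss(posterior_mean, posterior_sigma)
                   for _ in range(n_posterior_samples)]
        for level in hits:
            low, high = central_interval(samples, level)
            if low <= truth <= high:
                hits[level] += 1
    return {level: count / n_injections for level, count in hits.items()}


coverage = run_injections(n_injections=1000, n_posterior_samples=400,
                          noise_sigma=0.5, rng=random.Random(3))
print(coverage)
```

For a well-calibrated posterior, the measured coverage should sit near the nominal level up to sampling noise. A learned model that is overconfident would show coverage below the nominal values.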

Parameter Space Coverage

- Mass range: 5-100 M☉ per component

- Spin magnitudes: 0-0.85

- All sky locations and orientations

- Distance: 100-1000 Mpc

SNR Dependence

- High SNR (>20): Excellent accuracy

- Moderate SNR (10-20): Robust performance

- Low SNR (<10): Graceful degradation, remains unbiased

Ablation Studies

Prior Sampling Impact

Comparing different training strategies:

- Uniform Prior Sampling: Baseline performance

- Physics-Informed Interim Sampling: 30% reduction in KL divergence

- Optimal Interim Distribution: Best performance

Architecture Variations

- Fewer coupling layers: Degraded accuracy

- More coupling layers: Marginal improvements, higher cost

- Feature network depth: Sweet spot at 4-6 layers

Training Data Size

- 10k samples: Underfitting

- 100k samples: Good performance

- 1M samples: Marginal additional benefit

Impact

For Gravitational Wave Astronomy

Operational Capabilities

- Real-time parameter estimation for low-latency alerts

- Rapid analysis enabling multi-messenger follow-up

- Large-scale catalog reanalysis feasible

- Support for third-generation detectors (Einstein Telescope, Cosmic Explorer)

Scientific Applications

- Population studies with thousands of events

- Hierarchical Bayesian inference for astrophysics

- Tests of general relativity across large samples

- Cosmological parameter constraints from standard sirens

Community Impact

- Establishes normalizing flows as viable alternative to MCMC

- Motivates further machine learning research in GW astronomy

- Provides open-source baseline for method comparisons

For Machine Learning

Methodological Contributions

- Demonstrates value of domain knowledge in ML

- Prior-informed sampling as general strategy

- Normalizing flows for complex scientific inference

- Bridging physics and machine learning communities

High-Dimensional Inference

- Practical validation in 15D space

- Handling multimodality and correlations

- Amortized inference for repeated problems

- Uncertainty quantification with probabilistic models

For Bayesian Inference

Computational Efficiency

- Orders of magnitude speedup over MCMC

- Amortization: One-time training cost

- Enables previously infeasible analyses

- Interactive exploration of posteriors

Accuracy Preservation

- Maintains scientific rigor of Bayesian approach

- Well-calibrated credible intervals

- No compromise on posterior quality

- Suitable for publication-quality results

Resources

Publication

- Journal: Big Data Mining and Analytics, Volume 5, Issue 1 (March 2022)

- DOI: 10.26599/BDMA.2021.9020018

Open Source Code

- GitHub Repository: https://github.com/AI-HPC-Research-Team/GW_PE_prior_sampling

- Includes: Full source code, training scripts, model specifications, detailed documentation

- Reproducibility: All experiments fully reproducible

- License: Open source for research use

Authors

- He Wang

- Zhoujian Cao

- Yue Zhou

- Zong-Kuan Guo

- Zhixiang Ren

Background and Context

GW150914

- First gravitational wave detection (September 14, 2015)

- Binary black hole merger

- Masses: ~36 M☉ and ~29 M☉

- Distance: ~420 Mpc

- Signal-to-noise ratio: ~24

LIGO Observing Runs

- O1 (September 2015 - January 2016): 3 detections

- O2 (November 2016 - August 2017): 8 additional detections

- Growing catalog necessitates faster analysis methods

LALInference

- LIGO’s official Bayesian inference code

- Uses nested sampling (LALInference_nest) or MCMC (LALInference_mcmc)

- Gold standard for parameter estimation

- Computationally expensive but highly accurate

Related Work

Normalizing Flows

- Invertible neural networks for density estimation

- RealNVP, MAF, IAF, Glow architectures

- Applications in computer vision, NLP, physics

Machine Learning for Gravitational Waves

- Detection: CNN-based searches

- Classification: Signal vs. noise, glitch identification

- Parameter estimation: Neural networks, Gaussian processes

- Denoising: Autoencoders, GANs

Simulation-Based Inference

- Likelihood-free inference methods

- Neural posterior estimation (NPE)

- Neural ratio estimation (NRE)

- Broader SBI community

Software and Tools

Deep Learning Frameworks

- PyTorch or TensorFlow

- Normalizing flow libraries (nflows, glasflow, FrEIA)

- GPU acceleration for training and inference

Gravitational Wave Software

- LALSuite: LIGO analysis software

- PyCBC: Python toolkit for GW analysis

- Bilby: Bayesian inference library

- GWpy: Data access and processing

Future Directions

Methodological Extensions

- Conditional normalizing flows with richer conditioning

- Attention mechanisms for multi-detector data

- Hybrid methods combining flows with MCMC

- Uncertainty quantification and out-of-distribution detection

Broader Applications

- Space-based detectors (LISA, Taiji, TianQin)

- Neutron star mergers with tidal deformability

- Eccentric orbits and precession

- Overlapping signals and global fitting

Operational Deployment

- Integration into LIGO/Virgo/KAGRA pipelines

- Real-time inference for public alerts

- Low-latency parameter estimation for GCN notices

- Support for next-generation detectors

Population Inference

- Hierarchical Bayesian analysis

- Mass, spin, and redshift distributions

- Astrophysical model selection

- Selection effects and detection biases