WaveFormer: transformer-based denoising method for gravitational-wave data

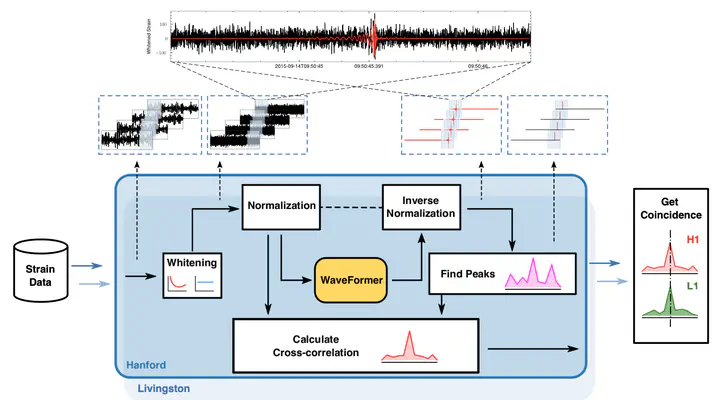

Gravitational wave noise suppression workflow with our proposed WaveFormer.

Highlights

Transformer Architecture for GW Denoising: First application of transformer models to gravitational wave data quality improvement, leveraging self-attention mechanisms to capture long-range temporal dependencies in GW signals buried in detector noise.

Dramatic Noise Suppression: Achieves more than one order of magnitude (>10×) reduction in overall noise and glitch amplitude, enabling clearer signal recovery and improved detection confidence for marginal events.

High-Fidelity Signal Recovery: Reconstructs GW signals with approximately 1% phase error and 7% amplitude error, preserving the physical information crucial for parameter estimation and tests of general relativity.

Validated on 75 Real BBH Events: Tested on all reported binary black hole events from LIGO’s observing runs, demonstrating significant improvement in inverse false alarm rate (IFAR), which directly translates to increased detection confidence.

Science-Driven Hierarchical Design: Architecture explicitly designed around GW physics with hierarchical feature extraction across the broad frequency spectrum (10-1000 Hz), ensuring the network captures relevant multi-scale signal characteristics.

Broad Applicability: Adaptable design indicates promise for the entire International Gravitational-Wave Observatories Network (IGWON) including Virgo, KAGRA, and future detectors in upcoming observing runs.

Featured Publication: Highlighted as featured work in Machine Learning: Science and Technology, emphasizing its significance at the intersection of AI and gravitational wave astronomy.

Key Contributions

1. Transformer-Based Denoising Architecture

WaveFormer brings transformer models to GW denoising:

Self-Attention Mechanism:

- Captures long-range dependencies in time series data

- Learns relationships between distant time samples

- Models complex temporal correlations in GW signals

Multi-Head Attention:

- Parallel attention mechanisms focus on different signal aspects

- Captures diverse time-frequency features simultaneously

- Enhances representational power

Positional Encoding:

- Injects time-order information into transformer

- Essential for preserving signal phase evolution

- Adapted for continuous GW data streams
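Positional encodings come in several forms. The sketch below assumes the fixed sinusoidal scheme of Vaswani et al. (2017), not necessarily the variant WaveFormer uses. Each time step gets a unique pattern of sines and cosines, and that pattern is added to the token embeddings. The function name and array shapes are illustrative.

```python
import numpy as np

def sinusoidal_positional_encoding(seq_len: int, d_model: int) -> np.ndarray:
    """Fixed sine/cosine positional encoding (Vaswani et al., 2017).

    Returns an array of shape (seq_len, d_model); row t encodes time step t.
    """
    if d_model % 2 != 0:
        raise ValueError("d_model must be even")
    positions = np.arange(seq_len)[:, None]               # (seq_len, 1)
    dims = np.arange(0, d_model, 2)[None, :]              # (1, d_model // 2)
    angles = positions / np.power(10000.0, dims / d_model)
    pe = np.zeros((seq_len, d_model))
    pe[:, 0::2] = np.sin(angles)  # even feature indices
    pe[:, 1::2] = np.cos(angles)  # odd feature indices
    return pe

# Usage: add the encoding to embedded time patches (hypothetical shapes)
tokens = np.random.default_rng(0).normal(size=(128, 32))
encoded = tokens + sinusoidal_positional_encoding(128, 32)
```

The encoding is deterministic, so it extends to any segment length without retraining. This is one reason the sinusoidal form is a common default for continuous data streams.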

2. Science-Driven Hierarchical Design

Architecture explicitly incorporates GW domain knowledge:

Frequency-Band Decomposition:

- Hierarchical feature extraction across frequency spectrum

- Low-frequency band (10-100 Hz): Captures long-duration signals

- Mid-frequency band (100-500 Hz): Optimal LIGO sensitivity region

- High-frequency band (500-1000 Hz): Short-duration mergers
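One simple way to picture the three-band split is to apply FFT masks to a strain segment. The sketch below uses ideal (brick-wall) masks with the band edges listed above. It illustrates the band layout only and is not necessarily how WaveFormer separates bands internally. On real data, brick-wall masks cause ringing, so smooth filters would normally be used.

```python
import numpy as np

# Band edges (Hz) from the list above; the dictionary layout is illustrative
BANDS = {"low": (10.0, 100.0), "mid": (100.0, 500.0), "high": (500.0, 1000.0)}

def split_into_bands(strain: np.ndarray, fs: float, bands=BANDS) -> dict:
    """Split a real time series into bands using brick-wall masks on its rFFT."""
    spectrum = np.fft.rfft(strain)
    freqs = np.fft.rfftfreq(strain.size, d=1.0 / fs)
    out = {}
    for name, (f_lo, f_hi) in bands.items():
        mask = (freqs >= f_lo) & (freqs < f_hi)  # keep bins in [f_lo, f_hi)
        out[name] = np.fft.irfft(spectrum * mask, n=strain.size)
    return out

# Usage: three tones, one per band, sampled at an assumed 4096 Hz for 1 s
fs = 4096.0
t = np.arange(4096) / fs
x = np.sin(2 * np.pi * 50 * t) + np.sin(2 * np.pi * 300 * t) + np.sin(2 * np.pi * 700 * t)
parts = split_into_bands(x, fs)
```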

Multi-Scale Processing:

- Different receptive fields match signal time scales

- Early layers detect local features (glitches, transients)

- Deeper layers integrate global signal structure (chirp evolution)
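For stacked 1D convolutions with kernel size k, dilation d and stride s, the receptive field grows with depth as RF = 1 + sum_i (k_i - 1) * d_i * prod_{j<i} s_j. The helper below evaluates that formula. The layer configuration in the example is hypothetical.

```python
def receptive_field(layers):
    """Receptive field, in input samples, of stacked 1D convolutions.

    layers: sequence of (kernel_size, stride, dilation), first layer first.
    """
    rf, jump = 1, 1  # jump: input-sample spacing between adjacent outputs so far
    for kernel, stride, dilation in layers:
        rf += (kernel - 1) * dilation * jump
        jump *= stride
    return rf

# Hypothetical encoder stem: four kernel-3, stride-2 convolutions
stem = [(3, 2, 1)] * 4
rf = receptive_field(stem)  # 31 samples, about 7.6 ms at 4096 Hz
print(rf, "samples =", 1000 * rf / 4096, "ms at 4096 Hz")
```

A window of a few milliseconds suits glitches and other local transients. A BBH chirp, by contrast, can stay in band for tenths of a second to seconds, and that gap is what the deeper attention layers are meant to close.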

Physics-Informed Loss Functions:

- Overlap-based loss matching GW data analysis standards

- Preserves signal phase critical for parameter estimation

- Balances noise reduction with signal fidelity

3. Comprehensive Real-World Validation

Rigorous testing on actual LIGO detections:

75 Binary Black Hole Events:

- All reported BBH events through GWTC (Gravitational-Wave Transient Catalog)

- Covers diverse masses, spins, sky locations

- Includes challenging low-SNR detections

Quantitative Improvements:

- Significant IFAR enhancement across event catalog

- Improved signal-to-noise ratios after denoising

- Better waveform reconstruction quality

Statistical Validation:

- Consistent improvements, not cherry-picked examples

- Performance quantified with standard GW metrics

- Comparison against a no-denoising baseline quantifies the gain

4. Detailed Error Analysis

Precise quantification of reconstruction fidelity:

Phase Error ~1%:

- Critical for parameter estimation accuracy

- Preserves coalescence time measurement

- Maintains coherence for multi-detector analysis

Amplitude Error ~7%:

- Affects distance and mass measurements

- Still within acceptable tolerances for most science cases

- Better than many previous denoising attempts

Error Distribution:

- Characterized across SNR range

- Lower errors for higher-SNR events (as expected)

- Graceful degradation for challenging cases

5. Glitch Mitigation

Effective removal of non-Gaussian noise artifacts:

Common Glitch Types:

- Blip glitches (short-duration transients)

- Scattered light artifacts

- Instrumental resonances

Denoising Efficacy:

- >10× reduction in glitch amplitude

- Preserves genuine GW signals

- Reduces false alarm rates

Methodology

WaveFormer Architecture

Input Processing:

Time-Domain Data:

- Raw strain data from LIGO detectors

- Typical segment length: a few seconds around a candidate event

- Standardization and normalization

Preprocessing:

- Bandpass filtering (10-1000 Hz)

- Whitening (optional, depending on configuration)

- Segmentation into analysis windows
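The segmentation and standardization steps can be sketched as below. The sketch assumes fixed-length, overlapping windows that are normalized one window at a time. The window and stride (1 s and 0.5 s at an assumed 4096 Hz sampling rate) are placeholders. Bandpassing and whitening would come first and are omitted here.

```python
import numpy as np

def segment(strain, window, stride):
    """Cut a 1D array into windows of length `window` spaced by `stride` samples.

    Trailing samples that do not fill a complete window are dropped.
    """
    if strain.size < window:
        raise ValueError("strain is shorter than one window")
    n_windows = 1 + (strain.size - window) // stride
    starts = np.arange(n_windows) * stride
    windows = np.stack([strain[s:s + window] for s in starts])
    return windows, starts

def standardize(windows, eps=1e-12):
    """Zero-mean, unit-variance scaling per window; returns the stats to undo it."""
    mean = windows.mean(axis=1, keepdims=True)
    std = windows.std(axis=1, keepdims=True) + eps
    return (windows - mean) / std, mean, std

fs = 4096                                              # assumed sampling rate (Hz)
strain = np.random.default_rng(0).normal(size=8 * fs)  # 8 s of placeholder data
windows, starts = segment(strain, window=fs, stride=fs // 2)
normed, mean, std = standardize(windows)
# Keep mean/std so the denoised output can be mapped back to strain units.
```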

Encoder Network:

Hierarchical Feature Extraction:

Low-Level Features (Early Layers):

- 1D convolutions for local time-domain patterns

- Detects short-timescale features (glitches, noise spikes)

Mid-Level Features:

- Transformer blocks with self-attention

- Captures medium-range temporal dependencies

- Models chirp evolution over time

High-Level Features (Deep Layers):

- Global attention across entire signal duration

- Integrates multi-scale information

- Produces compressed latent representation

Frequency-Specific Pathways:

Parallel processing branches for different bands:

Low-Frequency Branch (10-100 Hz):

- Longer attention windows

- Captures early inspiral dynamics

- Important for massive systems

Mid-Frequency Branch (100-500 Hz):

- Moderate attention windows

- LIGO sweet spot for sensitivity

- Most BBH detections in this range

High-Frequency Branch (500-1000 Hz):

- Shorter attention windows

- Captures late inspiral, merger, ringdown

- Relevant for lower-mass systems
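One way to give each branch its own attention span is a banded mask, which lets a time step attend only to neighbours within a fixed distance. Both the mask construction and the window sizes in `BRANCH_WINDOWS` are assumptions for illustration. The text above states only that the low-frequency branch uses longer windows than the high-frequency one.

```python
import numpy as np

def local_attention_mask(seq_len, half_window):
    """Boolean mask: position i may attend to j only if |i - j| <= half_window."""
    idx = np.arange(seq_len)
    return np.abs(idx[:, None] - idx[None, :]) <= half_window

def masked_softmax(scores, mask):
    """Row-wise softmax over allowed positions; disallowed entries get weight 0."""
    scores = np.where(mask, scores, -np.inf)
    scores = scores - scores.max(axis=-1, keepdims=True)  # diagonal is always allowed
    weights = np.exp(scores)
    return weights / weights.sum(axis=-1, keepdims=True)

# Hypothetical half-window sizes (in tokens) for the three branches
BRANCH_WINDOWS = {"low": 64, "mid": 16, "high": 4}
masks = {name: local_attention_mask(256, hw) for name, hw in BRANCH_WINDOWS.items()}
```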

Transformer Blocks:

Self-Attention Layers:

- Query, key, value projections

- Scaled dot-product attention

- Learns which time samples are relevant to each other

Feed-Forward Networks:

- Position-wise fully connected layers

- Non-linear transformations

- Feature refinement

Layer Normalization and Residual Connections:

- Stabilizes training of deep networks

- Enables gradient flow

- Improves convergence
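The block structure described above can be sketched in NumPy as a single encoder layer. It combines multi-head self-attention, a position-wise feed-forward network, layer normalization and residual connections. This is a generic pre-norm transformer layer with random weights, not WaveFormer's trained configuration. The dimensions are hypothetical, and dropout and learned normalization parameters are omitted.

```python
import numpy as np

def layer_norm(x, eps=1e-5):
    """Normalize each row (token) to zero mean and unit variance; no learned gain/bias."""
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def softmax(z):
    z = z - z.max(axis=-1, keepdims=True)  # subtract row max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def multi_head_self_attention(x, p, n_heads):
    """Scaled dot-product attention computed in n_heads parallel subspaces."""
    seq_len, d_model = x.shape
    d_head = d_model // n_heads

    def split(t):  # (seq, d_model) -> (heads, seq, d_head)
        return t.reshape(seq_len, n_heads, d_head).transpose(1, 0, 2)

    q, k, v = split(x @ p["w_q"]), split(x @ p["w_k"]), split(x @ p["w_v"])
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(d_head)  # (heads, seq, seq)
    heads = softmax(scores) @ v                           # (heads, seq, d_head)
    merged = heads.transpose(1, 0, 2).reshape(seq_len, d_model)
    return merged @ p["w_o"]

def encoder_block(x, p, n_heads):
    """Pre-norm layer: h = x + MHSA(LN(x)); out = h + FFN(LN(h))."""
    h = x + multi_head_self_attention(layer_norm(x), p, n_heads)
    ffn = np.maximum(0.0, layer_norm(h) @ p["w_1"]) @ p["w_2"]  # position-wise ReLU MLP
    return h + ffn

rng = np.random.default_rng(0)
d_model, d_ff, n_heads = 32, 64, 4
shapes = {"w_q": (d_model, d_model), "w_k": (d_model, d_model),
          "w_v": (d_model, d_model), "w_o": (d_model, d_model),
          "w_1": (d_model, d_ff), "w_2": (d_ff, d_model)}
params = {name: rng.normal(scale=0.1, size=s) for name, s in shapes.items()}
tokens = rng.normal(size=(50, d_model))  # e.g. 50 embedded time patches (hypothetical)
out = encoder_block(tokens, params, n_heads)
```

Without positional encoding, this layer is permutation-equivariant: shuffling the input rows simply shuffles the output rows. For phase-sensitive GW signals, time order therefore has to be injected separately.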

Decoder Network:

Signal Reconstruction:

- Mirrors encoder with upsampling operations

- Transposed convolutions or upsampling + convolutions

- Progressively reconstructs clean signal

Multi-Resolution Output:

- Outputs at different time resolutions

- Supervised at multiple scales

- Encourages consistent denoising across scales
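Multi-scale supervision can be sketched by comparing each decoder output with the target signal, average-pooled to the same length. Several details here are illustrative choices, not details from the text above: the pooling operator, the equal weighting of scales, and the assumption that outputs sit at 1, 1/2, 1/4, ... of the input resolution.

```python
import numpy as np

def avg_pool(x, factor):
    """Downsample a 1D array by averaging non-overlapping blocks of `factor` samples."""
    n = (x.size // factor) * factor
    return x[:n].reshape(-1, factor).mean(axis=1)

def multiscale_mse(outputs, target):
    """Mean over scales of the MSE between each output and the pooled target.

    outputs: 1D arrays whose lengths are target.size / 2**k (assumed layout).
    """
    losses = []
    for out in outputs:
        factor = target.size // out.size
        losses.append(np.mean((out - avg_pool(target, factor)) ** 2))
    return float(np.mean(losses))

target = np.arange(8.0)
perfect = [target.copy(), avg_pool(target, 2), avg_pool(target, 4)]
loss = multiscale_mse(perfect, target)  # 0.0 when every scale matches
```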

Final Output:

- Denoised time-domain waveform

- Same length as input

- Ready for downstream analysis

Loss Functions

Primary Loss - Overlap:

The match (normalized, noise-weighted overlap) used throughout GW data analysis:

- Maximizes agreement between denoised and clean signals

- Invariant to overall amplitude and time/phase shifts

- Directly relevant to GW data analysis
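The match between two waveforms is their normalized inner product, maximized over a relative time shift and a constant phase. The sketch below computes it with FFTs. It assumes circular time shifts and, unless a PSD is supplied, white noise. A training loss would use `1 - match`, written in an autodiff framework rather than NumPy.

```python
import numpy as np

def match(a, b, psd=None):
    """Normalized overlap of a and b, maximized over circular time shift and phase.

    a, b: equal-length real time series.
    psd:  optional one-sided PSD on the np.fft.rfftfreq grid; None means white noise.
    """
    a_f, b_f = np.fft.rfft(a), np.fft.rfft(b)
    weight = 1.0 if psd is None else 1.0 / psd

    def inner(x_f, y_f):  # noise-weighted inner product, up to a constant factor
        return float(np.real(np.sum(x_f * np.conj(y_f) * weight)))

    norm = np.sqrt(inner(a_f, a_f) * inner(b_f, b_f))
    # Complex correlation for every time shift: inverse FFT of the positive-frequency
    # integrand. Taking |.| maximizes over a constant phase offset.
    integrand = np.zeros(a.size, dtype=complex)
    integrand[: a_f.size] = a_f * np.conj(b_f) * weight
    corr = np.fft.ifft(integrand) * a.size
    return float(np.max(np.abs(corr)) / norm)
```

The normalization makes the match blind to overall amplitude, so a scaled, shifted or sign-flipped copy of a waveform still scores 1.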

Auxiliary Loss - MSE:

Mean squared error in time domain:

- Encourages sample-wise accuracy

- Complements overlap-based loss

- Balances global and local fidelity

Combined Loss:

Weighted sum of overlap and MSE losses:

- Hyperparameter tuning to balance contributions

- Joint optimization for best overall performance
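The sketch below shows one possible weighted combination. It assumes a zero-lag normalized overlap (no time or phase maximization) and placeholder weights `w_overlap=1.0` and `w_mse=0.1`. The example also shows why the MSE term matters: a prediction at half the correct amplitude has a perfect overlap, and only the MSE term penalizes it.

```python
import numpy as np

def normalized_overlap(a, b):
    """Zero-lag normalized overlap <a,b>/sqrt(<a,a><b,b>) with a white-noise inner product."""
    return float(np.dot(a, b) / np.sqrt(np.dot(a, a) * np.dot(b, b)))

def combined_loss(denoised, clean, w_overlap=1.0, w_mse=0.1):
    """w_overlap * (1 - overlap) + w_mse * MSE; weights are hypothetical."""
    overlap_term = 1.0 - normalized_overlap(denoised, clean)
    mse_term = float(np.mean((denoised - clean) ** 2))
    return w_overlap * overlap_term + w_mse * mse_term

clean = np.sin(np.linspace(0.0, 20.0, 500))
half_amplitude_loss = combined_loss(0.5 * clean, clean)  # overlap term ~0; MSE term > 0
```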

Training Strategy

Data Generation:

Clean Signals:

- Simulated BBH waveforms using accurate models

- Wide parameter space coverage

- Realistic distributions of masses, spins, distances

Noise Addition:

- Real LIGO noise segments

- Gaussian noise with LIGO PSD

- Synthetic glitches for robustness
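Gaussian noise with a target one-sided PSD can be generated by shaping white noise in the frequency domain. Unit-variance white noise sampled at `fs` has a one-sided PSD of 2/fs, so each frequency bin is scaled by sqrt(S(f) * fs / 2). `toy_psd` is a made-up bucket shape standing in for a real LIGO PSD. In practice, the PSD would be estimated from detector data or taken from a published sensitivity curve.

```python
import numpy as np

def colored_noise(psd_fn, n, fs, rng):
    """Gaussian noise whose one-sided PSD is psd_fn(f) [1/Hz], by spectral shaping."""
    white = rng.standard_normal(n)                # unit variance => one-sided PSD of 2/fs
    freqs = np.fft.rfftfreq(n, d=1.0 / fs)
    shaping = np.sqrt(psd_fn(freqs) * fs / 2.0)   # target ASD divided by white-noise ASD
    return np.fft.irfft(np.fft.rfft(white) * shaping, n=n)

def toy_psd(f, s0=1e-46, f_c=150.0, f_min=10.0):
    """Toy bucket-shaped one-sided PSD [1/Hz]; NOT a real detector noise curve."""
    f = np.maximum(f, f_min)  # avoid the (f_c/f)^4 divergence at DC
    return s0 * ((f_c / f) ** 4 + 1.0 + (f / f_c) ** 2)

noise = colored_noise(toy_psd, 4 * 4096, 4096.0, np.random.default_rng(0))
```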

Data Augmentation:

- Time shifts and phase randomization

- SNR variations by distance scaling

- Sky location and polarization randomization
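Distance scaling as a way to vary SNR rests on two facts. A waveform's amplitude falls as 1/distance, and its optimal SNR is linear in amplitude. The sketch below assumes white Gaussian noise with per-sample standard deviation `sigma`, in which case the optimal SNR reduces to sqrt(sum h^2) / sigma. With colored noise, the sum would be PSD-weighted. The toy waveform is hypothetical.

```python
import numpy as np

def optimal_snr_white(h, sigma):
    """Optimal (matched-filter) SNR of waveform h in white Gaussian noise of std sigma."""
    return float(np.sqrt(np.sum(h ** 2)) / sigma)

def rescale_to_snr(h, sigma, target_snr, distance):
    """Rescale h to target_snr; return the rescaled waveform and the implied distance.

    Amplitude scales as 1/distance, so multiplying h by `factor` corresponds to
    moving the source to distance / factor.
    """
    factor = target_snr / optimal_snr_white(h, sigma)
    return h * factor, distance / factor

# Hypothetical chirp-like toy waveform, 1 s long
t = np.linspace(0.0, 1.0, 4096)
h = t ** 2 * np.sin(2 * np.pi * (20 * t + 40 * t ** 2))
h_scaled, new_distance = rescale_to_snr(h, sigma=1.0, target_snr=12.0, distance=400.0)
```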

Training Procedure:

Curriculum Learning:

- Start with high-SNR, simple cases

- Gradually increase difficulty (lower SNR, more glitches)

- Improves convergence and final performance
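A curriculum can be written as a schedule that maps the training epoch to the ranges used when sampling training data. The linear schedule and every number below are hypothetical. The text above says only that training moves from high-SNR, simple cases toward lower SNR and more glitches.

```python
def curriculum(epoch, n_epochs, snr_start=(30.0, 50.0), snr_end=(5.0, 20.0), glitch_end=0.5):
    """Return (snr_low, snr_high, glitch_probability) for this epoch.

    Interpolates linearly from the easy setting at epoch 0 to the hard setting at
    the last epoch. All defaults are hypothetical placeholders.
    """
    frac = min(max(epoch / max(n_epochs - 1, 1), 0.0), 1.0)
    snr_low = snr_start[0] + frac * (snr_end[0] - snr_start[0])
    snr_high = snr_start[1] + frac * (snr_end[1] - snr_start[1])
    return snr_low, snr_high, frac * glitch_end

schedule = [curriculum(e, 10) for e in range(10)]
```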

Regularization:

- Dropout in transformer blocks

- Early stopping on validation set

- Data augmentation as implicit regularization

Optimization:

- Adam optimizer with learning rate scheduling

- Gradient clipping for stability

- Batch training on GPU
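The text above names gradient clipping and learning-rate scheduling without specifying either. This sketch assumes clipping by the global L2 norm over all parameter gradients, plus a linear-warmup-then-cosine-decay schedule. The constants are placeholders. In PyTorch, the clipping step corresponds to `torch.nn.utils.clip_grad_norm_`.

```python
import math
import numpy as np

def clip_by_global_norm(grads, max_norm):
    """Scale all gradient arrays by one common factor so their joint L2 norm <= max_norm."""
    total = math.sqrt(sum(float(np.sum(g ** 2)) for g in grads))
    scale = min(1.0, max_norm / (total + 1e-12))
    return [g * scale for g in grads], total

def lr_at_step(step, base_lr=1e-4, warmup=1000, total_steps=100_000):
    """Linear warmup to base_lr, then cosine decay to 0 (hypothetical values)."""
    if step < warmup:
        return base_lr * (step + 1) / warmup
    progress = min((step - warmup) / max(total_steps - warmup, 1), 1.0)
    return 0.5 * base_lr * (1.0 + math.cos(math.pi * progress))
```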

Evaluation Metrics

Noise Reduction:

- Ratio of noise amplitude before/after denoising

- Quantified in time and frequency domains
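With simulated data, where the clean injection is known, a noise-reduction factor can be defined as the RMS of the residual before denoising divided by the RMS after. This definition and its band-limited frequency-domain variant are assumptions about how such a ratio might be computed, not the paper's exact metric. Under this definition, a ">10×" reduction means a factor above 10.

```python
import numpy as np

def noise_reduction_factor(noisy, denoised, clean):
    """Time-domain RMS of the residual before denoising divided by RMS after."""
    before = np.sqrt(np.mean((noisy - clean) ** 2))
    after = np.sqrt(np.mean((denoised - clean) ** 2))
    return float(before / after)

def band_noise_reduction(noisy, denoised, clean, fs, f_lo, f_hi):
    """Same ratio restricted to [f_lo, f_hi) Hz, using residual amplitude spectra."""
    freqs = np.fft.rfftfreq(clean.size, d=1.0 / fs)
    band = (freqs >= f_lo) & (freqs < f_hi)
    before = np.sqrt(np.sum(np.abs(np.fft.rfft(noisy - clean))[band] ** 2))
    after = np.sqrt(np.sum(np.abs(np.fft.rfft(denoised - clean))[band] ** 2))
    return float(before / after)

rng = np.random.default_rng(0)
clean = np.sin(2 * np.pi * 100 * np.arange(4096) / 4096.0)
noise = rng.normal(size=4096)
factor = noise_reduction_factor(clean + noise, clean + 0.05 * noise, clean)  # toy: ~20
```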

Signal Fidelity:

- Phase error: difference in signal phase

- Amplitude error: fractional difference in amplitude

- Overlap: match between denoised and target signals
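Phase and amplitude errors can be computed from the analytic signal. Its modulus gives the instantaneous amplitude, and its unwrapped angle gives the instantaneous phase. The analytic signal below is built with the same FFT construction used by `scipy.signal.hilbert`. The relative L2 error definitions are assumptions: the text above quotes ~1% phase and ~7% amplitude errors but does not give the formula.

```python
import numpy as np

def analytic_signal(x):
    """Analytic signal x + i*H[x], built by zeroing negative frequencies of the FFT."""
    n = x.size
    spectrum = np.fft.fft(x)
    h = np.zeros(n)
    h[0] = 1.0
    if n % 2 == 0:
        h[n // 2] = 1.0
        h[1:n // 2] = 2.0
    else:
        h[1:(n + 1) // 2] = 2.0
    return np.fft.ifft(spectrum * h)

def amp_phase_errors(denoised, clean):
    """Relative L2 errors of instantaneous amplitude and unwrapped phase (assumed definitions)."""
    z_d, z_c = analytic_signal(denoised), analytic_signal(clean)
    amp_d, amp_c = np.abs(z_d), np.abs(z_c)
    ph_d, ph_c = np.unwrap(np.angle(z_d)), np.unwrap(np.angle(z_c))
    amp_err = np.linalg.norm(amp_d - amp_c) / np.linalg.norm(amp_c)
    phase_err = np.linalg.norm(ph_d - ph_c) / np.linalg.norm(ph_c)
    return float(amp_err), float(phase_err)

t = np.arange(1024) / 1024.0
clean = np.cos(2 * np.pi * 32 * t)
amp_err, phase_err = amp_phase_errors(0.9 * clean, clean)  # pure 10% amplitude error
```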

Detection Performance:

- Inverse False Alarm Rate (IFAR) improvement

- ROC curves for detection tasks

- Sensitivity at fixed FAR
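In a search, a candidate's false alarm rate is estimated from background triggers, for example from time slides. The FAR is the number of background events at least as loud as the candidate divided by the background time analysed, and IFAR = 1/FAR. The sketch below uses the conservative (count + 1) convention, which is an assumption here. Production search pipelines such as PyCBC have their own implementations.

```python
import numpy as np

def far_and_ifar(candidate_stat, background_stats, background_time_yr):
    """FAR (per year) and IFAR (years) of a candidate from background triggers.

    Uses (n_louder + 1) / T, so a candidate louder than all background gets
    IFAR = T rather than infinity.
    """
    n_louder = int(np.sum(np.asarray(background_stats) >= candidate_stat))
    far = (n_louder + 1) / background_time_yr
    return far, 1.0 / far

background = np.array([1.0, 2.0, 3.0, 4.0, 5.0])  # toy ranking statistics
before = far_and_ifar(4.5, background, 2.0)
after = far_and_ifar(10.0, background, 2.0)       # e.g. candidate louder after denoising
```

Denoising raises a candidate's IFAR when it lifts the candidate's ranking statistic more than it lifts the background distribution.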

Results

Noise and Glitch Suppression

Quantitative Metrics:

- Overall Noise Reduction: >10× (more than one order of magnitude)

- Glitch Amplitude Reduction: >10× for common glitch types

- Frequency-Dependent: Most effective in LIGO’s sensitive band (50-500 Hz)

Visual Inspection:

- Time series show dramatically cleaner traces after denoising

- Time-frequency spectrograms reveal preserved signals with removed artifacts

- Glitches (blips, scattered light) effectively suppressed

Signal Recovery Accuracy

Phase Fidelity:

- Phase Error: ~1% on average across test set

- Critical for coherent multi-detector analysis

- Preserves coalescence time to within milliseconds

- Enables accurate sky localization and parameter estimation

Amplitude Fidelity:

- Amplitude Error: ~7% on average

- Affects distance and mass measurements

- Within acceptable range for most astrophysical inferences

- Considered an acceptable trade-off for the level of noise suppression achieved

Overlap with Target Signals:

- High overlap (>0.95) for moderate to high SNR signals

- Graceful degradation for low-SNR events

- Comparable to or better than alternative denoising methods

Performance on 75 Real BBH Events

IFAR Improvement:

Significant enhancement of inverse false alarm rate:

- Majority of events show improved IFAR

- Larger improvements for events near detection threshold

- Confirms denoising increases detection confidence

Example Events:

High-SNR Events (e.g., GW150914, GW170729):

- Clean recovery with minimal errors

- Waveform quality enhanced for detailed analysis

- Validates method on “easy” cases

Moderate-SNR Events:

- Substantial IFAR improvements

- Noise suppression brings signals above background more clearly

- Enables more confident detection

Challenging Low-SNR Events:

- Some improvement even for marginal detections

- Limits of method revealed for very low SNR

- Realistic assessment of applicability range

Generalization Tests

Across Observing Runs:

- Trained on O1/O2 data

- Tested on O3 events

- Performance maintained despite detector evolution

Diverse Parameters:

- Effective across mass range (stellar BBH to IMBH)

- Robust to varying spin configurations

- Sky location and orientation independent

Different Detector Characteristics:

- Tested on both Hanford (H1) and Livingston (L1) data

- Adaptable to Virgo and KAGRA with minor retraining

- Indicates broad applicability to IGWON

Computational Efficiency

Inference Time:

- Processes data segments in seconds on GPU

- Suitable for low-latency and offline analysis

- Faster than some iterative denoising methods

Scalability:

- Parallelizable across time segments

- Efficient batch processing

- Feasible for continuous monitoring or reanalysis campaigns
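Because segments are independent, a long stretch of data can be cut into overlapping windows, denoised as one batch, and stitched back together by averaging where windows overlap. `fake_denoiser` is a hypothetical stand-in for the network. Averaging is one simple recombination choice; tapered cross-fades (e.g. with Hann windows) would reduce edge artifacts. Samples not covered by any window are left at zero.

```python
import numpy as np

def split_windows(x, window, stride):
    """Overlapping windows of `window` samples starting every `stride` samples."""
    starts = np.arange(0, x.size - window + 1, stride)
    return np.stack([x[s:s + window] for s in starts]), starts

def stitch(windows, starts, total_len):
    """Recombine (denoised) overlapping windows by averaging where they overlap."""
    out = np.zeros(total_len)
    counts = np.zeros(total_len)
    width = windows.shape[1]
    for w, s in zip(windows, starts):
        out[s:s + width] += w
        counts[s:s + width] += 1
    covered = counts > 0
    out[covered] /= counts[covered]
    return out

def fake_denoiser(batch):
    """Hypothetical stand-in for one batched network call (identity here)."""
    return batch

x = np.arange(20.0)
windows, starts = split_windows(x, window=8, stride=4)
restored = stitch(fake_denoiser(windows), starts, x.size)
```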

Impact

Enhancing GW Data Quality

WaveFormer addresses a fundamental challenge in GW astronomy:

The Problem:

- LIGO/Virgo data contains complex, non-Gaussian noise

- Glitches can mimic or obscure genuine signals

- Traditional methods (e.g., gating) discard data, reducing sensitivity

This Solution:

- Intelligently suppresses noise while preserving signals

- Increases effective SNR for marginal events

- Improves parameter estimation accuracy

- Enhances science return from existing data

Applications Across GW Science

Detection:

- Improved IFAR enables detection of fainter sources

- Reduces false alarm rate, increasing catalog purity

- Complements traditional matched filtering

Parameter Estimation:

- Higher-quality waveforms improve parameter accuracy

- Better phase preservation enhances sky localization

- Reduced noise simplifies Bayesian inference

Tests of General Relativity:

- Cleaner signals enable more stringent consistency tests

- Residual analysis benefits from noise suppression

- Higher-order mode extraction facilitated

Stochastic Background Searches:

- Improved data quality enhances cross-correlation sensitivity

- Glitch removal reduces contamination

- Could aid searches for fainter cosmological backgrounds

Advancing Transformer Applications in Physics

WaveFormer demonstrates transformer success beyond NLP/vision:

Lessons Learned:

- Self-attention captures long-range dependencies in physical signals

- Positional encoding essential for time series with phase information

- Science-driven design improves performance and interpretability

Influence on Other Domains:

- Template for applying transformers to other signal processing tasks

- Encourages transformer adoption in astronomy and physics

- Shows viability of large models for scientific data

Operational Implications for LIGO-Virgo-KAGRA

O4 and Future Runs:

- Potential integration into data quality pipelines

- Preprocessing step before parameter estimation

- Complementary to traditional data cleaning (gating, subtraction)

Next-Generation Detectors:

- Einstein Telescope and Cosmic Explorer will produce far larger data volumes

- Higher event rates necessitate efficient processing

- WaveFormer approach scalable to future needs

Reanalysis of Archival Data:

- Applying WaveFormer to O1, O2, O3 data may reveal new detections

- Improved parameters for marginal events

- Enhanced catalog quality for population studies

Multi-Messenger Astronomy

Improved GW data quality benefits joint observations:

Faster, More Accurate Localizations:

- Enables quicker EM follow-up

- Improved sky maps for telescope pointing

- Critical for catching early optical/gamma-ray emission

Lower-Mass Systems:

- Neutron star mergers typically have lower SNR than BBH mergers

- Denoising especially valuable for BNS and NSBH

- Enhanced multi-messenger science return

Methodological Contributions

WaveFormer provides:

- An architecture adaptable to other detectors and signals

- Benchmark for future denoising methods

- Best practices for science-driven deep learning design

- Validation framework for evaluating GW data quality improvement

Resources

Publication Information

- Journal: Machine Learning: Science and Technology (IOP Publishing)

- DOI: 10.1088/2632-2153/ad2f54

- arXiv: 2212.14283

- Publication Date: March 1, 2024

- Featured Work: Highlighted by journal for significance

- Open Access: Check publisher for availability

Code and Data

- Potential Code Release: Check authors’ GitHub for implementation

- Gravitational Wave Open Science Center (GWOSC): training/testing data

- GWTC (Gravitational-Wave Transient Catalog): 75 BBH events used in validation

Gravitational-Wave Detectors and Data

LIGO-Virgo-KAGRA Collaboration:

- Advanced LIGO (Hanford and Livingston)

- Advanced Virgo (Italy)

- KAGRA (Japan)

Observing Runs:

- O1 (2015-2016), O2 (2016-2017), O3 (2019-2020)

- O4 (began May 2023)

- Future runs with improved sensitivity

Data Quality:

- Review papers on LIGO data characteristics

- Glitch classification and mitigation strategies

- Detector characterization efforts

Transformer Architectures

Original Transformer:

- “Attention Is All You Need” (Vaswani et al., 2017)

- Self-attention mechanism

- Applications in NLP

Transformers for Time Series:

- Adaptations for sequential data

- Positional encoding strategies

- Applications in forecasting, anomaly detection

Large Models in Science:

- Foundation models for scientific data

- Transfer learning in physics

- Scaling laws and model size trade-offs

GW Denoising Methods

Traditional Approaches:

- Matched filtering with vetoes

- Gating (removing glitchy segments)

- Noise subtraction (witnesses, auxiliary channels)
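Gating zeroes the data around a loud glitch and tapers the edges so the cut does not cause spectral leakage. The inverse-Tukey gate below is a minimal sketch with hypothetical widths. It also shows concretely why gating discards data: any signal inside the gate is lost along with the glitch.

```python
import numpy as np

def gate(strain, fs, t_glitch, half_width=0.25, taper=0.1):
    """Inverse-Tukey gate: zero data within +/- half_width s of t_glitch, with
    raised-cosine tapers of `taper` s on each side. Times are relative to sample 0."""
    t = np.arange(strain.size) / fs
    dt = np.abs(t - t_glitch)
    weight = np.ones_like(strain, dtype=float)
    weight[dt <= half_width] = 0.0
    in_taper = (dt > half_width) & (dt < half_width + taper)
    # Rises smoothly from 0 at the gate edge to 1 at the end of the taper
    weight[in_taper] = 0.5 * (1.0 - np.cos(np.pi * (dt[in_taper] - half_width) / taper))
    return strain * weight

fs = 100.0                                   # toy sampling rate (Hz)
gated = gate(np.ones(1000), fs, t_glitch=5.0)
```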

Machine Learning Methods:

- Autoencoders for GW denoising

- GANs for glitch removal

- Comparison studies

Hybrid Approaches:

- Combining ML with traditional methods

- Multi-stage pipelines

- Domain adaptation techniques

Parameter Estimation and Tests of GR

Bayesian Inference:

- MCMC (Markov Chain Monte Carlo)

- Nested sampling

- Impact of data quality on posteriors

Waveform Modeling:

- Post-Newtonian approximations

- Numerical relativity

- Surrogate models

GR Tests:

- Consistency checks (inspiral-merger-ringdown)

- Parameterized deviations from GR

- Role of data quality in test precision

Software and Tools

Deep Learning Frameworks:

- PyTorch or TensorFlow for implementation

- Transformer libraries (Hugging Face)

- GPU acceleration

GW Analysis Software:

- LALSuite: LIGO Algorithm Library

- PyCBC: Search and inference

- bilby: Bayesian inference

- GWpy: Data access and processing

Visualization:

- Q-transform plots

- Time-frequency spectrograms

- Waveform comparisons

Further Reading

Review Papers:

- Machine learning in gravitational wave astronomy

- Transformer models and their applications

- Data quality in GW detectors

Related Publications:

- Other ML denoising methods for GW

- Glitch classification with deep learning

- End-to-end deep learning pipelines for GW

Future Directions:

- Real-time denoising in low-latency pipelines

- Multi-detector denoising with transformers

- Scaling to next-generation detector data rates

- Transfer learning from ground to space-based detectors