Challenges in space-based gravitational wave data analysis and applications of artificial intelligence

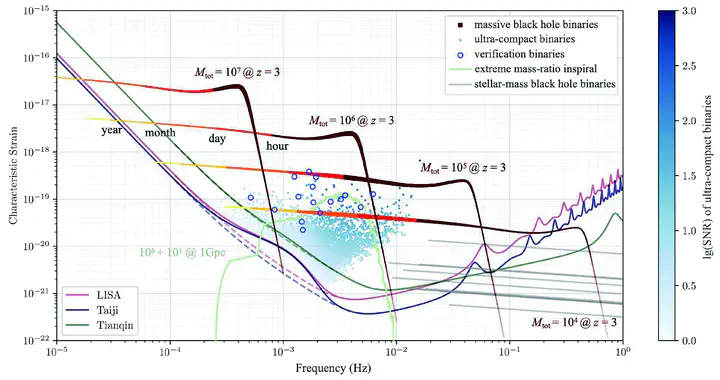

(Color online) The design sensitivity curves and primary target sources of the space-based gravitational wave detectors LISA, Taiji, and TianQin. The sensitivity of each detector is the total sensitivity of the first-generation Michelson-AET channel under the equal-arm-length approximation, expressed in terms of characteristic strain. The dashed curves represent the sensitivities resulting from instrumental noise (according to the noise requirements of LISA [11], Taiji [18], and TianQin [21]), while the solid curves represent the results after incorporating the galactic foreground noise.

Overview

This comprehensive review article provides a systematic examination of the unprecedented data analysis challenges facing space-based gravitational wave detection missions (LISA, Taiji, TianQin) and presents the transformative role artificial intelligence is playing in addressing these challenges. As the first major Chinese-language review on this topic, it serves as both a tutorial for newcomers and a comprehensive reference for researchers in the field.

Context and Motivation

The Dawn of Space-Based Gravitational Wave Astronomy

Following the spectacular success of ground-based detectors (LIGO, Virgo, KAGRA), the next frontier is space:

Three Major Space Missions:

- LISA (Laser Interferometer Space Antenna): ESA-NASA collaboration, launch planned for the mid-2030s

- Taiji: Chinese mission, similar timeline to LISA

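- TianQin: Chinese mission in a geocentric orbit with shorter arms (about $1.7 \times 10^5$ km), most sensitive at somewhat higher frequencies than LISA and Taiji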

Scientific Targets:

- Massive black hole binaries (MBHBs): $10^4$ to $10^7\,M_\odot$

- Extreme mass ratio inspirals (EMRIs): stellar objects orbiting massive black holes

- Galactic compact binaries: millions of white dwarf binaries in our galaxy

- Stochastic gravitational wave background

- Unexpected sources and phenomena

Unprecedented Data Analysis Challenges

Space-based detection faces unique difficulties:

Signal Complexity:

- Thousands of overlapping sources simultaneously

- Long-lived signals that stay in band for weeks to years

- Parameter spaces with 10-20 dimensions per source

- Non-stationary instrumental characteristics

Computational Demands:

- Global fitting of all sources together

- Bayesian inference in ultra-high dimensions

- Years of continuous data streams

- Real-time analysis requirements for alerts

Data Quality Issues:

- Instrumental glitches and artifacts

- Data gaps from various causes

- Time-varying noise characteristics

- Multiple data channels to combine

These challenges go well beyond those encountered in ground-based detection and call for fundamentally new approaches, an area where AI methods are particularly promising.

Scope and Structure

Comprehensive Coverage

The review systematically addresses:

Theoretical Foundations:

- Bayesian statistical inference framework

- Signal models and waveform templates

- Detector response and data characteristics

- Noise modeling and subtraction

Data Analysis Methodology:

- Likelihood function construction

- Sampling algorithms (MCMC, nested sampling, etc.)

- Global fitting strategies

- Computational optimization techniques

AI Applications:

- Machine learning for waveform modeling

- Neural networks for signal detection

- Deep learning for parameter estimation

- AI-driven noise characterization

- Automated anomaly detection

Target Audience

Written for:

- Graduate students entering the field

- Researchers transitioning from ground-based to space-based detection

- AI/ML scientists interested in gravitational wave applications

- Theoretical physicists seeking observational context

- Mission planners and data pipeline designers

Key Themes and Contributions

1. Bayesian Framework as Organizing Principle

The review uses Bayesian inference as the conceptual thread:

Fundamental Elements:

- Prior: Astrophysical population models and parameter bounds

- Likelihood: Relation between parameters and observed data

- Posterior: Inferred parameter distributions given observations

- Evidence: Model comparison and selection
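In symbols, the four elements above are tied together by Bayes' theorem. The notation here ($\pi$ for the prior, $\mathcal{Z}$ for the evidence, $M$ for the model) is a common convention assumed for illustration, not necessarily the one used in the review:

$$p(\theta \mid d, M) = \frac{\mathcal{L}(\theta \mid d)\,\pi(\theta \mid M)}{\mathcal{Z}}, \qquad \mathcal{Z} = \int \mathcal{L}(\theta \mid d)\,\pi(\theta \mid M)\,\mathrm{d}\theta$$

Two competing models $M_1$ and $M_2$ can then be compared through the ratio of their evidences, $\mathcal{Z}_1 / \mathcal{Z}_2$ (the Bayes factor).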

Computational Challenges:

- High-dimensional parameter spaces

- Multimodal posteriors from parameter degeneracies

- Expensive likelihood evaluations

- Evidence computation for model selection

AI Connections:

- Neural networks for fast likelihood approximation

- Normalizing flows for posterior sampling

- Machine learning for proposal distributions

- Amortized inference across source populations

2. Waveform Modeling Landscape

Comprehensive treatment of gravitational wave signal models:

Theoretical Approaches:

- Numerical Relativity: Full Einstein equations solved numerically

- Post-Newtonian: Perturbative expansion in orbital velocity

- Effective-One-Body: Resummation of PN series with NR calibration

- Phenomenological: Data-driven interpolation models

AI-Enhanced Modeling:

- Neural networks learning waveforms from NR simulations

- Gaussian processes interpolating in parameter space

- Surrogate models for fast evaluation

- Reduced-order modeling with ML compression

Trade-offs:

- Accuracy vs. computational cost

- Physical fidelity vs. speed

- Domain of validity vs. generality

3. Detector Response and Data Model

Detailed discussion of space-based detector characteristics:

LISA/Taiji/TianQin Configurations:

- Triangular constellation of three spacecraft

- Arm lengths from about $10^5$ km (TianQin) to millions of kilometers (LISA, Taiji)

- Laser interferometry between spacecraft

- Multiple data channels (A, E, T combinations)

Response Function:

- Time-dependent due to orbital motion

- Sky-location and polarization dependence

- Doppler modulation

- Antenna pattern functions

Noise Sources:

- Instrumental Noise: Laser frequency fluctuations, proof mass acceleration noise

- Confusion Noise: Unresolved galactic binaries forming stochastic foreground

- Glitches: Transient artifacts

- Gaps: Data downlink, instrumental issues

Data Combination Strategies:

- Time-delay interferometry (TDI) to cancel laser noise

- Optimal data channel combinations

- Multi-channel analysis benefits
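As an illustration of the channel combinations listed above, the A, E, T channels are commonly built as fixed linear combinations of the three Michelson TDI channels X, Y, Z. The sketch below uses one widespread convention (signs and ordering vary between papers) and a toy noise model, assumed here, in which X, Y, Z share equal variances and equal pairwise correlations, as in the equal-arm idealisation.

```python
import numpy as np

def xyz_to_aet(X, Y, Z):
    """Combine Michelson TDI channels X, Y, Z into A, E, T.

    One common convention; other references differ by signs or ordering.
    """
    X, Y, Z = (np.asarray(c, dtype=float) for c in (X, Y, Z))
    A = (Z - X) / np.sqrt(2.0)
    E = (X - 2.0 * Y + Z) / np.sqrt(6.0)
    T = (X + Y + Z) / np.sqrt(3.0)
    return A, E, T

# Toy noise (an assumption, not real TDI output): each channel is independent
# white noise plus a shared component, so all pairs are equally correlated.
rng = np.random.default_rng(0)
common = rng.normal(size=200_000)
X, Y, Z = (rng.normal(size=common.size) + 0.5 * common for _ in range(3))

A, E, T = xyz_to_aet(X, Y, Z)
aet_corr = np.corrcoef([A, E, T])   # off-diagonal entries should be close to zero
print(np.round(aet_corr, 2))
```

Under this symmetric toy model the three output channels are approximately uncorrelated, which is why per-channel likelihoods can simply be summed. With unequal arms or unequal noise levels the decorrelation is only approximate.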

4. Likelihood Function Construction

Central challenge in Bayesian inference:

Standard Form: $$\mathcal{L}(\theta | d) \propto \exp\left(-\frac{1}{2}\langle d - h(\theta) | d - h(\theta) \rangle\right)$$

Where:

- $d$: observed data

- $h(\theta)$: signal template with parameters $\theta$

- $\langle \cdot | \cdot \rangle$: noise-weighted inner product
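The noise-weighted inner product is usually defined as $\langle a | b \rangle = 4\,\mathrm{Re}\int_0^\infty \tilde{a}(f)\,\tilde{b}^*(f) / S_n(f)\,\mathrm{d}f$, with $S_n(f)$ the one-sided noise power spectral density. A minimal sketch of a discrete version follows; the function names and the white-noise toy setup are assumptions made for illustration.

```python
import numpy as np

def inner_product(a, b, psd, dt):
    """Discrete approximation of <a|b> = 4 Re int a~(f) conj(b~(f)) / S_n(f) df.

    a, b : real time series of equal length, sampled every dt seconds
    psd  : one-sided noise PSD evaluated at np.fft.rfftfreq(len(a), dt)
    """
    n = len(a)
    df = 1.0 / (n * dt)
    a_f = dt * np.fft.rfft(a)          # approximate continuous Fourier transform
    b_f = dt * np.fft.rfft(b)
    integrand = (a_f * np.conj(b_f)).real / psd
    return 4.0 * df * np.sum(integrand[1:])   # skip the DC bin

def log_likelihood(data, template, psd, dt):
    """Gaussian log-likelihood, up to a parameter-independent constant."""
    residual = data - template
    return -0.5 * inner_product(residual, residual, psd, dt)

# Toy check with white noise of standard deviation sigma (one-sided PSD = 2 sigma^2 dt)
dt, n, sigma = 1.0, 1024, 1.0
t = np.arange(n) * dt
signal = np.sin(2 * np.pi * 8 * t / (n * dt))     # exactly 8 cycles in the record
psd = np.full(n // 2 + 1, 2.0 * sigma**2 * dt)
snr_sq = inner_product(signal, signal, psd, dt)
```

For white noise the inner product reduces to the familiar time-domain sum $\sum_i a_i b_i / \sigma^2$, so `snr_sq` here equals the sum of squared signal samples.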

Complications in Space-Based Case:

- Overlapping signals: $d = \sum_i h_i(\theta_i) + n$

- Non-Gaussian noise from glitches

- Time-varying noise PSD

- Computationally expensive template generation

AI Solutions:

- Fast neural surrogate likelihoods

- Learned noise characteristics

- Implicit likelihood inference

- Simulation-based inference techniques

5. Sampling Strategies

Survey of algorithms for exploring posterior distributions:

Markov Chain Monte Carlo (MCMC):

- Metropolis-Hastings algorithm

- Hamiltonian Monte Carlo for gradient utilization

- Parallel tempering for multimodality

- Adaptive proposals
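The Metropolis-Hastings step at the core of these samplers can be sketched in a few lines. This is a minimal random-walk version with a fixed Gaussian proposal and a toy two-dimensional Gaussian target (both assumptions for illustration); production samplers add tempering, adaptation, and convergence diagnostics.

```python
import numpy as np

def metropolis_hastings(log_post, theta0, step, n_steps, rng):
    """Random-walk Metropolis-Hastings with a symmetric Gaussian proposal."""
    theta = np.atleast_1d(np.asarray(theta0, dtype=float))
    lp = log_post(theta)
    chain = np.empty((n_steps, theta.size))
    n_accept = 0
    for i in range(n_steps):
        proposal = theta + step * rng.normal(size=theta.size)
        lp_prop = log_post(proposal)
        # Accept with probability min(1, posterior ratio)
        if np.log(rng.uniform()) < lp_prop - lp:
            theta, lp = proposal, lp_prop
            n_accept += 1
        chain[i] = theta
    return chain, n_accept / n_steps

# Toy target: unit-variance Gaussian centred on (1, -2)
target_mean = np.array([1.0, -2.0])

def log_post(theta):
    return -0.5 * np.sum((theta - target_mean) ** 2)

rng = np.random.default_rng(1)
chain, acceptance = metropolis_hastings(log_post, [0.0, 0.0], step=1.0,
                                        n_steps=20_000, rng=rng)
posterior_mean = chain[2_000:].mean(axis=0)   # discard burn-in
```

Because the proposal is symmetric, the acceptance test needs only the posterior ratio. Learned proposals, as mentioned later in the review, replace the fixed Gaussian step and require the full Hastings correction.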

Nested Sampling:

- Evidence computation alongside parameter estimation

- Efficient for multimodal posteriors

- Dynamic nested sampling variants

- Parallelization challenges

Modern Innovations:

- Normalizing Flows: Learned bijective transformations for efficient sampling

- Variational Inference: Optimization-based approximate posterior

- Neural Posterior Estimation: Direct neural network posterior approximation

- Simulation-Based Inference: Bypasses explicit likelihood evaluation
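To make "learned bijective transformations" concrete, the sketch below implements a single RealNVP-style affine coupling layer in NumPy. The weights are random and untrained, and the class name and layer sizes are hypothetical; the point is only to show how invertibility and the log-determinant come for free from the layer structure.

```python
import numpy as np

class AffineCoupling:
    """Coupling layer on 2-D inputs: x1 passes through unchanged and
    x2 is scaled and shifted by functions of x1."""

    def __init__(self, rng, hidden=8):
        self.w1 = rng.normal(scale=0.5, size=(1, hidden))
        self.b1 = np.zeros(hidden)
        self.w2 = rng.normal(scale=0.5, size=(hidden, 2))
        self.b2 = np.zeros(2)

    def _scale_shift(self, x1):
        h = np.tanh(x1 @ self.w1 + self.b1)
        out = h @ self.w2 + self.b2
        return np.tanh(out[:, :1]), out[:, 1:]   # bounded log-scale, shift

    def forward(self, x):
        x1, x2 = x[:, :1], x[:, 1:]
        s, t = self._scale_shift(x1)
        y = np.hstack([x1, x2 * np.exp(s) + t])
        return y, s.sum(axis=1)                  # log |det Jacobian|

    def inverse(self, y):
        y1, y2 = y[:, :1], y[:, 1:]
        s, t = self._scale_shift(y1)
        return np.hstack([y1, (y2 - t) * np.exp(-s)])

rng = np.random.default_rng(0)
layer = AffineCoupling(rng)
z = rng.normal(size=(1000, 2))                   # samples from the base density
x, log_det = layer.forward(z)
# Density of the transformed samples via the change-of-variables formula
log_q = -0.5 * np.sum(z**2, axis=1) - np.log(2 * np.pi) - log_det
z_back = layer.inverse(x)
```

In a trained flow, several such layers are stacked with the roles of the two coordinates alternating, and the weights are optimised so that the transformed density matches the target posterior.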

AI Enhancements:

- Learned proposal distributions

- Neural network surrogate models for fast evaluation

- Adaptive sampling guided by ML

- Amortized inference across many events

6. Global Fitting Problem

Unique to space-based detection:

Challenge:

- Thousands of sources overlap in data

- Must fit all simultaneously

- Parameter correlations across sources

- Combinatorial explosion of possibilities

Strategies:

- Reversible-jump MCMC for varying number of sources

- Trans-dimensional sampling

- Hierarchical modeling

- Iterative source subtraction
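Of the strategies above, iterative source subtraction is the simplest to sketch. The toy example below greedily finds the loudest spectral peak, fits a sinusoid at that frequency by least squares, subtracts it, and repeats. It assumes monochromatic sources sitting exactly on FFT bins in white noise, which is far simpler than realistic galactic binaries, and it is a greedy stand-in rather than a true joint (global) fit.

```python
import numpy as np

def fit_sinusoid(t, y, f):
    """Least-squares fit of a*cos + b*sin at a fixed frequency f."""
    basis = np.column_stack([np.cos(2 * np.pi * f * t), np.sin(2 * np.pi * f * t)])
    coef, *_ = np.linalg.lstsq(basis, y, rcond=None)
    return basis @ coef

def iterative_subtraction(t, data, max_sources, threshold):
    """Subtract the loudest sinusoid until the peak falls below
    threshold times the median periodogram level."""
    dt = t[1] - t[0]
    freqs = np.fft.rfftfreq(len(t), dt)
    residual = data.copy()
    found = []
    for _ in range(max_sources):
        power = np.abs(np.fft.rfft(residual)) ** 2
        power[0] = 0.0                       # ignore the DC bin
        k = int(np.argmax(power))
        if power[k] < threshold * np.median(power[1:]):
            break
        residual = residual - fit_sinusoid(t, residual, freqs[k])
        found.append(freqs[k])
    return found, residual

# Toy data: three sinusoids of decreasing amplitude in white noise
rng = np.random.default_rng(2)
n = 4096
t = np.arange(n, dtype=float)
true_bins, amps = [50, 120, 300], [1.0, 0.6, 0.3]
data = 0.5 * rng.normal(size=n)
for k, a in zip(true_bins, amps):
    data += a * np.sin(2 * np.pi * k * t / n + rng.uniform(0, 2 * np.pi))

found, residual = iterative_subtraction(t, data, max_sources=10, threshold=30.0)
```

Greedy subtraction ignores correlations between overlapping sources, so errors in early fits contaminate later ones. That limitation is the motivation for the trans-dimensional and global-fit approaches listed above.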

AI Approaches:

- Neural networks for source detection and counting

- Deep learning for source separation

- Reinforcement learning for search strategies

- Attention mechanisms for multi-source modeling

7. AI for Waveform Modeling

Detailed examination of ML approaches to signal generation:

Generative Models:

- Variational Autoencoders (VAEs) for waveform compression

- Generative Adversarial Networks (GANs) for sample generation

- Conditional normalizing flows for parameter-to-waveform mapping

Surrogate Modeling:

- Neural networks approximating expensive waveforms

- Gaussian processes for uncertainty quantification

- Reduced-basis methods with ML-selected bases

- Multi-fidelity modeling
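The compression step behind reduced-basis and reduced-order surrogates can be illustrated with a plain singular value decomposition. The sketch below uses a toy damped sinusoid as a stand-in for an expensive waveform model, and classical SVD truncation rather than an ML-selected basis; the parameter ranges and the tolerance are arbitrary assumptions.

```python
import numpy as np

def waveform(t, f, tau=0.5):
    """Toy damped sinusoid standing in for an expensive waveform model."""
    return np.exp(-t / tau) * np.sin(2 * np.pi * f * t)

t = np.linspace(0.0, 1.0, 1000)
train_f = np.linspace(5.0, 10.0, 200)
training = np.array([waveform(t, f) for f in train_f])   # shape (200, 1000)

# Rows of vt are orthonormal basis vectors in the time domain
_, sing, vt = np.linalg.svd(training, full_matrices=False)
rank = int(np.sum(sing / sing[0] > 1e-8))                # keep significant modes only
basis = vt[:rank]

# Project a waveform that was not in the training set onto the reduced basis
h_new = waveform(t, 7.3137)
coeffs = basis @ h_new
rel_err = np.linalg.norm(h_new - coeffs @ basis) / np.linalg.norm(h_new)
print(rank, rel_err)
```

A full surrogate would add a second stage that predicts `coeffs` directly from the source parameters, for example with a neural network or Gaussian process, so that the expensive model is never called at inference time.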

Benefits:

- Orders-of-magnitude speedup in likelihood evaluation

- Enable otherwise infeasible analyses

- Continuous coverage of parameter space

- Uncertainty quantification

Challenges:

- Validation against ground truth

- Accuracy requirements for scientific inference

- Generalization beyond training domain

- Systematic error control

8. AI for Noise and Data Quality

Machine learning transforming data preprocessing:

Noise Characterization:

- Non-stationary noise PSD estimation

- Anomaly detection in noise properties

- Glitch classification and removal

- Data gap handling
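A classical baseline for tracking a time-varying noise PSD is to estimate the spectrum separately in successive stretches of data. The sketch below implements a simple Welch-style estimator (Hann window, non-overlapping segments, no detrending) and applies it to toy white noise whose standard deviation doubles halfway through; the setup is an assumption for illustration, and learned estimators would replace or refine this step.

```python
import numpy as np

def welch_psd(x, dt, nperseg):
    """One-sided PSD from averaged Hann-windowed periodograms
    of non-overlapping segments."""
    nseg = len(x) // nperseg
    segs = x[:nseg * nperseg].reshape(nseg, nperseg)
    window = np.hanning(nperseg)
    norm = dt / np.sum(window ** 2)
    spec = np.abs(np.fft.rfft(segs * window, axis=1)) ** 2
    psd = 2.0 * norm * spec.mean(axis=0)
    # The DC bin (and the Nyquist bin for even nperseg) is not doubled
    psd[0] /= 2.0
    if nperseg % 2 == 0:
        psd[-1] /= 2.0
    return np.fft.rfftfreq(nperseg, dt), psd

# Toy non-stationary noise: sigma = 1 in the first half, sigma = 2 in the second
rng = np.random.default_rng(4)
dt, half = 1.0, 2 ** 16
x = np.concatenate([rng.normal(size=half), 2.0 * rng.normal(size=half)])

_, psd_early = welch_psd(x[:half], dt, nperseg=1024)
_, psd_late = welch_psd(x[half:], dt, nperseg=1024)
level_early = psd_early[1:-1].mean()   # expected near 2 * sigma^2 * dt = 2
level_late = psd_late[1:-1].mean()     # expected near 8
```

The choice of segment length trades frequency resolution against the ability to follow changes in the noise, which is one reason adaptive or learned PSD models are attractive for long data streams.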

Glitch Mitigation:

- Supervised learning for glitch identification

- Unsupervised clustering of glitch types

- Inpainting missing data

- Robust statistics for contaminated data
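As a small example of robust statistics on contaminated data, the sketch below flags glitch samples using the median and the median absolute deviation (MAD) instead of the mean and standard deviation, which a loud transient would bias. The threshold and the injected toy glitch are assumptions for illustration.

```python
import numpy as np

def flag_outliers(x, n_sigma=6.0):
    """Flag samples more than n_sigma robust standard deviations from the median."""
    med = np.median(x)
    mad = np.median(np.abs(x - med))
    robust_sigma = 1.4826 * mad        # MAD-to-sigma factor for Gaussian data
    return np.abs(x - med) > n_sigma * robust_sigma

# Toy data: unit Gaussian noise with a short, loud transient injected
rng = np.random.default_rng(5)
x = rng.normal(size=10_000)
x[5000:5005] += 20.0

mask = flag_outliers(x)
cleaned = np.where(mask, 0.0, x)       # crude gating; real pipelines taper or inpaint
```

Hard zeroing as done here introduces spectral leakage, so in practice the flagged stretch would be tapered or filled by an inpainting method such as those listed above.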

Quality Assessment:

- Automated data validation

- Real-time monitoring

- Predictive maintenance for instruments

- Confidence estimation for segments

9. AI for Signal Detection

Deep learning revolutionizing search pipelines:

Detection Architectures:

- Convolutional neural networks for time series

- Recurrent networks for temporal sequences

- Attention mechanisms for long-range dependencies

- Multi-scale architectures

Advantages:

- Real-time or faster-than-real-time processing

- Template-free detection of unexpected signals

- Handling of overlapping sources

- Automatic feature learning

Applications:

- MBHB rapid detection for multi-messenger alerts

- EMRI identification in confusion noise

- Extreme event discovery

- Triggered searches around external events

10. AI for Parameter Estimation

Neural approaches to inference:

Direct Regression:

- End-to-end networks: data → parameters

- Fast point estimates

- Uncertainty quantification challenges

Posterior Estimation:

- Conditional normalizing flows: bijective mapping to simple distributions

- Mixture density networks: flexible posterior families

- Neural posterior estimation: simulation-based inference

- Bayesian neural networks: uncertainty in network itself

Advantages:

- Amortization: train once, infer many times instantly

- Avoids MCMC for each new event

- Natural parallelization

- Enables population studies

Considerations:

- Training data requirements

- Generalization to distribution tails

- Systematic errors

- Validation strategies

11. Novel AI Techniques Highlighted

Cutting-edge methods:

Normalizing Flows:

- Detailed technical exposition

- Applications to GW inference

- Recent architectures (coupling layers, neural spline flows)

- Integration with sampling algorithms

Simulation-Based Inference (SBI):

- Likelihood-free inference

- Neural density estimation

- Sequential neural posterior estimation

- Applications when likelihood intractable
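The idea of likelihood-free inference can be shown with rejection ABC (approximate Bayesian computation), the simplest simulation-based method. It is used here as a stand-in for the neural density estimators listed above: parameters are drawn from the prior, data are simulated, and draws whose summary statistic lands close to the observed one are kept. The toy signal model, summary statistic, and tolerance are all assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(3)
n = 256
shape = np.sin(2 * np.pi * 5 * np.arange(n) / n)   # fixed unit-amplitude template

def simulate(amplitudes):
    """Forward model: amplitude * template + unit-variance white noise."""
    amplitudes = np.atleast_1d(amplitudes)
    noise = rng.normal(size=(amplitudes.size, n))
    return amplitudes[:, None] * shape + noise

def summary(data):
    """Least-squares amplitude estimate, used as the summary statistic."""
    return data @ shape / (shape @ shape)

# "Observed" data generated with a known amplitude
true_amp = 1.0
s_obs = summary(simulate(true_amp))[0]

# Rejection ABC: keep prior draws whose simulated summary is close to s_obs
prior_draws = rng.uniform(0.0, 2.0, size=20_000)
s_sim = summary(simulate(prior_draws))
accepted = prior_draws[np.abs(s_sim - s_obs) < 0.05]
post_mean, post_std = accepted.mean(), accepted.std()
```

The likelihood is never evaluated, only simulated from. Neural posterior estimation follows the same logic but replaces the accept/reject step with a conditional density estimator trained on the simulated pairs, which avoids the tolerance parameter and scales to many more dimensions.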

Transfer Learning:

- Pre-training on simulations

- Fine-tuning on real data

- Domain adaptation

- Multi-task learning

Physics-Informed Neural Networks:

- Incorporating Einstein equations

- Waveform consistency constraints

- Conservation laws

- Improved extrapolation

Practical Guidance

Software Ecosystems

The review discusses key tools:

Waveform Generation:

- LALSuite: LIGO Algorithm Library

- PhenomD/PhenomPv2: Phenomenological models

- Various NR waveform catalogs

Inference Frameworks:

- bilby: Bayesian inference library

- PyCBC: Search and parameter estimation

- LISACode/LISA Orbits: LISA-specific tools

AI Libraries:

- PyTorch/TensorFlow: Deep learning frameworks

- nflows/normflows: Normalizing flow implementations

- sbi: Simulation-based inference toolkit

- Custom domain-specific packages

Computational Resources

Infrastructure considerations:

Training Requirements:

- GPU clusters for neural network training

- Large-scale waveform simulation campaigns

- Data storage and management

- Parallelization strategies

Inference Deployment:

- Real-time processing constraints

- Cloud vs. HPC vs. on-premises

- Scalability to operational data rates

- Cost-benefit analyses

Critical Evaluation

AI Advantages

The review honestly assesses benefits:

- Speed: Orders of magnitude faster than traditional methods

- Flexibility: Learns from data, adapts to complexities

- Scalability: Handles high-dimensional problems

- Discovery: Potential for unexpected signal detection

AI Limitations

Also acknowledges challenges:

- Validation: Ensuring reliability for scientific inference

- Generalization: Performance on out-of-distribution data

- Interpretability: Understanding what networks learn

- Systematic Errors: Bias from training data or architecture

- Data Requirements: Need extensive simulations

Complementarity Perspective

Emphasizes synergy with traditional methods:

- Hybrid Pipelines: AI for screening, matched filtering for confirmation

- Mutual Validation: Cross-checks between approaches

- Specialized Roles: AI for detection speed, MCMC for posterior exploration

- Continual Improvement: Each method informs the other

Future Outlook

Near-Term (Before Launch)

Pre-mission developments:

- Refined AI architectures for data challenges

- Comprehensive validation studies

- End-to-end pipeline demonstrations

- Mission requirement refinement

Medium-Term (Early Operations)

Initial data analysis:

- Deployment of operational pipelines

- Refinement based on real data

- Rapid multi-messenger alerts

- First scientific discoveries

Long-Term Vision

Mature field:

- AI-human collaboration in discovery

- Automated science from GW data

- Integration across astronomy

- New paradigms from unexpected signals

Significance and Impact

For Chinese Gravitational Wave Community

Particularly important because:

- First Major Review: Comprehensive Chinese-language resource

- Supports Taiji/TianQin: Directly relevant to Chinese missions

- Educational Resource: Training next generation

- Research Roadmap: Guides future investigations

For International Community

Broader contributions:

- Comprehensive Synthesis: Pulls together scattered literature

- Bayesian Framework: Unified perspective on diverse methods

- AI Integration: How AI fits into established workflows

- Practical Guide: Actionable advice for practitioners

For AI in Science

Exemplifies:

- High-Stakes Application: Where reliability is paramount

- Domain Knowledge Integration: Physics-informed ML

- Validation Standards: Rigorous testing requirements

- Interdisciplinary Collaboration: Physicists and computer scientists

Related Work

The review connects to:

- Ground-based GW detection AI applications

- Astronomy big data challenges

- Bayesian inference methodology

- Scientific machine learning

Resources for Readers

The paper points to:

- Public datasets (LISA Data Challenges)

- Open-source software packages

- Educational materials and tutorials

- Active research collaborations

Conclusion

This comprehensive review establishes a foundation for understanding and advancing the critical role of artificial intelligence in space-based gravitational wave astronomy. By systematically covering challenges, methods, applications, and future directions within a coherent Bayesian framework, it serves as both an essential introduction for newcomers and a valuable reference for active researchers.

The work demonstrates that AI is not merely a convenience but a necessity for realizing the full scientific potential of missions like LISA, Taiji, and TianQin. As these missions approach launch, the synergy between advanced statistical methods, high-performance computing, and artificial intelligence will be essential for transforming raw data into profound insights about the universe.

For the gravitational wave community, this review charts a course through the complex landscape of data analysis challenges toward the exciting discoveries that await in the coming era of space-based gravitational wave astronomy.